Nested virtualization provides tremendous value for virtualization administrators, whether running on VMware or, now, on Hyper-V with Windows Server 2016 as the base for the Hyper-V role. It brings many benefits to the table, including hypervisor-hosted containers, dev/test environments, and pure lab or training environments that do well inside a nested environment. What are the differences in running nested environments on top of the two hypervisors? What configuration is involved with each platform to enable nested virtualization? Let’s take a look at VMware vs Hyper-V Nested Virtualization and see the similarities and differences between the platforms when it comes to providing a nested virtualization environment.

Why Nested Virtualization?

Nested virtualization provides a very interesting bit of “inception” when thinking about running workloads. When running nested virtualization, you are installing a hypervisor platform inside another hypervisor platform. So you can have a virtual machine hosted on a hypervisor that is itself running as a virtual machine on top of an existing hypervisor loaded on a physical server. This creates very interesting use cases and possibilities when it comes to spinning up hypervisor resources, and it gives virtualization administrators yet another tool for solving real business problems in the virtual environment. This includes provisioning dev/test environments with hypervisors mimicking production. However, aside from the dev/test or lab environments that have commonly been the use case for “nested” hypervisor installations, there are some real-world production use cases for running nested virtualization from both a VMware and Hyper-V perspective that we want to consider. Let’s take a look at a comparison of enabling nested virtualization from a VMware and Hyper-V perspective, as well as the differences between the two.

VMware vs Hyper-V Nested Virtualization

VMware has certainly been in the nested virtualization game for much longer than Hyper-V as Microsoft has only now released nested virtualization as a “thing” in Windows Server 2016 with Hyper-V. There are certainly similarities between the VMware and Hyper-V implementation of nested virtualization as to the requirements, especially in some of the virtual machine configuration required to make nested virtualization possible. We will compare the following:

- Processor requirements and extensions presented to the virtual machine

- Network requirements for connectivity to the production network

- Configuring a virtual machine for nested virtualization in both platforms

- Production use cases for nested virtualization

VMware vs Hyper-V Processor Requirements for Nested Virtualization

Both VMware and Hyper-V require that the processors used for nested virtualization have hardware-assisted virtualization enabled, which means Intel VT or AMD-V. This is the hardware-assisted technology provided by the two processor vendors. A note here: with Hyper-V on Windows Server 2016, nested virtualization is supported on Intel processors only. I have not been able to find any documentation stating that AMD processors are supported as of yet.

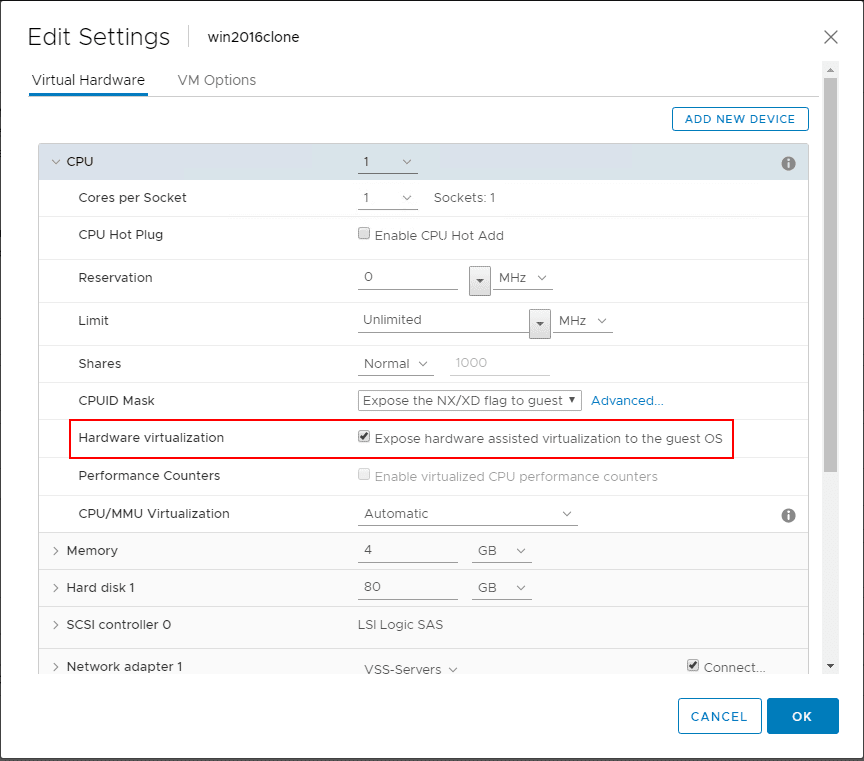

On both platforms, the hardware-assisted virtualization capabilities of the processor must be exposed directly to the guest virtual machine that will run the nested hypervisor.

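With VMware, this is the “Expose hardware assisted virtualization to the guest OS” checkbox in the CPU settings of the virtual machine. It can also be done via PowerCLI (thanks to LucD here):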

$vmName = 'MyVM'
$vm = Get-VM -Name $vmName
$spec = New-Object VMware.Vim.VirtualMachineConfigSpec
$spec.nestedHVEnabled = $true
$vm.ExtensionData.ReconfigVM($spec)
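To confirm that the flag actually took effect, the same setting can be read back from the virtual machine's configuration. This is a minimal sketch, assuming an existing PowerCLI session (`Connect-VIServer` already run) and the same placeholder VM name `MyVM` used above.

```powershell
# Assumption: a PowerCLI session is already connected and a VM named 'MyVM' exists.
$vmName = 'MyVM'

# Fetch the VM again so ExtensionData reflects the current configuration.
$vm = Get-VM -Name $vmName

# Config.NestedHVEnabled is the read side of the nestedHVEnabled flag set via ReconfigVM.
# It is expected to report True once hardware-assisted virtualization is exposed to the guest.
$vm.ExtensionData.Config.NestedHVEnabled
```

The VM generally needs to be powered off before the setting can be changed, so it is worth checking the value before powering the nested host back on.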

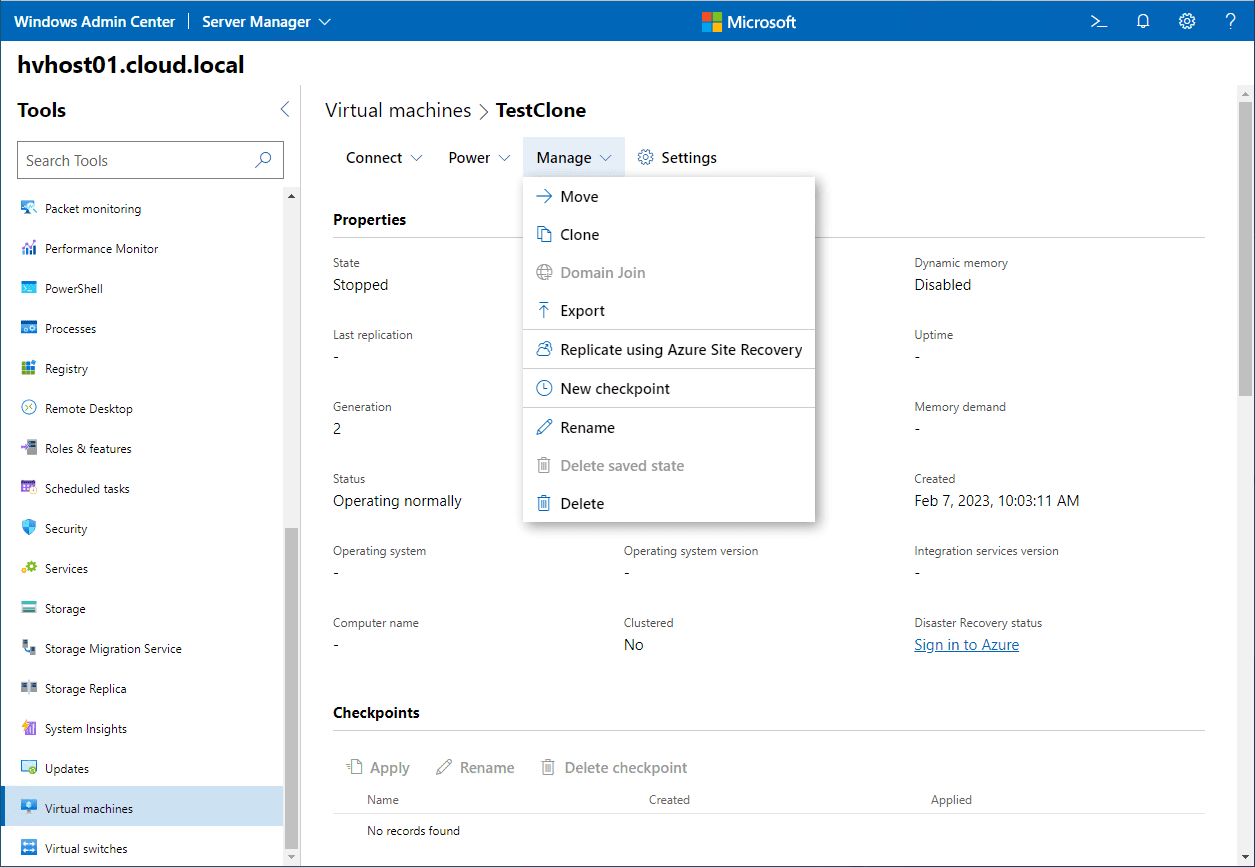

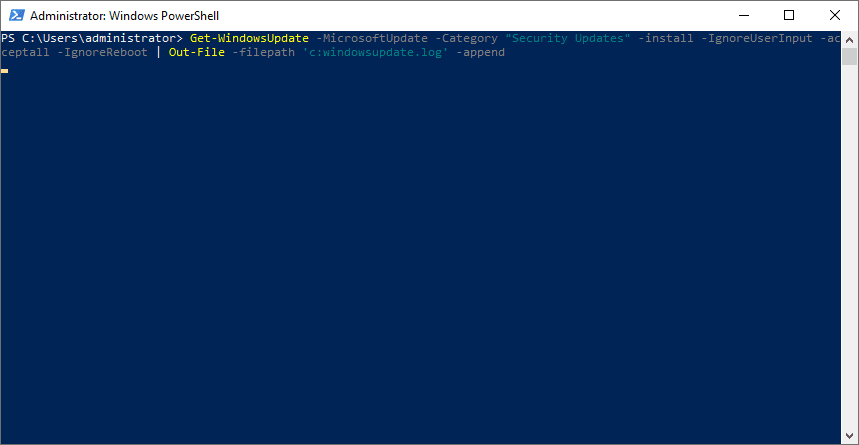

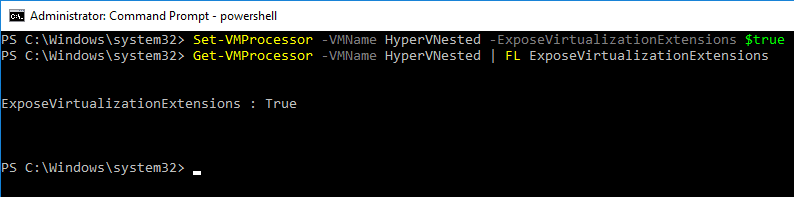

In Hyper-V, there is no way to do this from a GUI, so PowerShell makes quick work of this task with the following cmdlet, run while the virtual machine is powered off:

Set-VMProcessor -VMName <Hyper-V VM name> -ExposeVirtualizationExtensions $true

To check whether the extensions are enabled in Hyper-V, you can also use PowerShell:

Get-VMProcessor -VMName <VMName> | FL ExposeVirtualizationExtensions

VMware vs Hyper-V Network Requirements for Nested Virtualization

Both VMware and Hyper-V have specific network requirements (with VMware, depending on the version) for the nested virtual machine to be able to communicate with the outside world. VMware administrators have known for years about the requirement to enable promiscuous mode and forged transmits if they want nested virtual machines to communicate with the production network outside of the nested ESXi host.

What is promiscuous mode? Promiscuous mode can be defined at the port group level or the virtual switch level in ESXi. When enabled, it allows a virtual machine to see all the network traffic traversing the virtual switch or port group. Prior to ESXi 6.7, the vSphere Standard Switch and vSphere Distributed Switch did not implement MAC learning like a traditional physical switch. That being the case, the virtual switch only forwards network packets to a virtual machine if the destination MAC address matches the MAC address of that virtual machine’s virtual NIC, which for a nested ESXi host is the MAC address of its vmnic (the “pNIC” from the nested host’s point of view). In a nested environment, packets destined for the nested virtual machines carry different destination MAC addresses, so they are dropped. Enabling promiscuous mode works around this, but it introduces overhead on the virtual switch, since a port in promiscuous mode also receives traffic that is not addressed to it.

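Forged transmits covers the outbound direction: it allows a virtual machine to send frames whose source MAC address does not match the MAC address assigned to its virtual NIC. Traffic from nested virtual machines leaves the nested ESXi host carrying the nested machines’ own MAC addresses, so with forged transmits set to Accept, those packets are not dropped either.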

Resources for understanding

- Why is promiscuous mode & forged transmits required for nested ESXi?

- How the VMware forged transmits security policy works

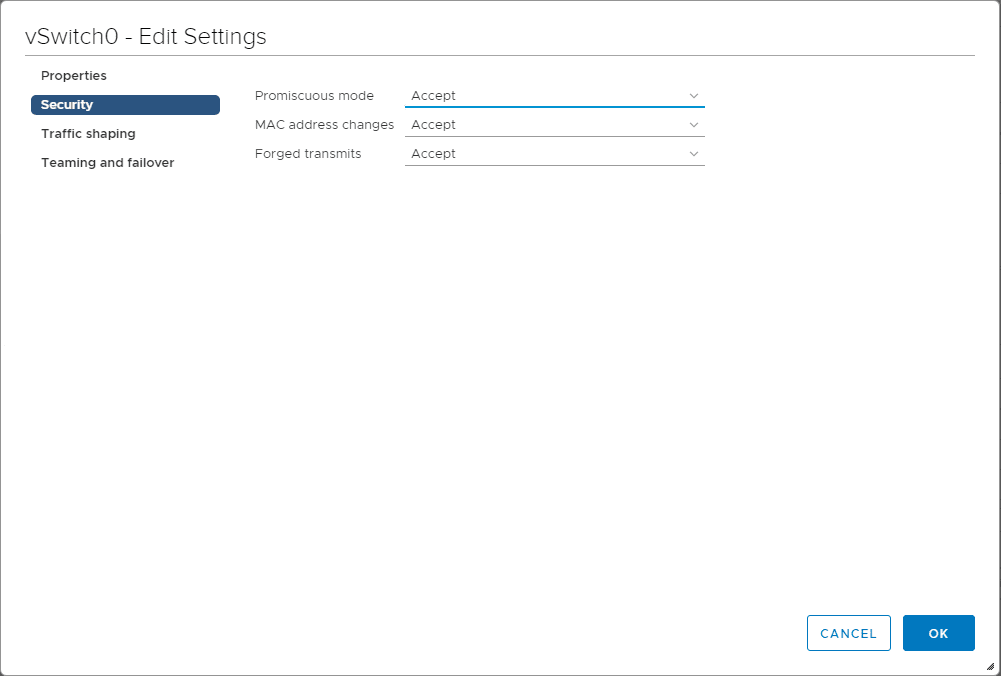

In the properties of a vSphere Standard Switch, under Security, set Promiscuous mode and Forged transmits to Accept.
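The same security settings can be applied with PowerCLI instead of the client. The following is a minimal sketch for a standard switch only, assuming an existing PowerCLI session; the host name `esxi01.lab.local` and switch name `vSwitch0` are placeholders that do not come from this article.

```powershell
# Assumptions: Connect-VIServer has already been run; the host and switch names are placeholders.
$esxHost = Get-VMHost -Name 'esxi01.lab.local'
$vSwitch = Get-VirtualSwitch -VMHost $esxHost -Name 'vSwitch0' -Standard

# Read the current security policy of the standard switch.
$policy = Get-SecurityPolicy -VirtualSwitch $vSwitch

# Set promiscuous mode and forged transmits to Accept.
$policy | Set-SecurityPolicy -AllowPromiscuous $true -ForgedTransmits $true
```

Because promiscuous mode adds overhead, a common design choice is to apply the policy to a dedicated port group for the nested hosts rather than to the whole switch. `Get-SecurityPolicy` also accepts a `-VirtualPortGroup` parameter for that purpose.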

With VMware vSphere ESXi 6.7, VMware has built the work of the long-standing MAC learning Fling into the product, adding MAC learning functionality to the virtual switch. With MAC learning in place, promiscuous mode is no longer needed with ESXi 6.7 to run nested virtualization. See William Lam’s great write-up about this new functionality below:

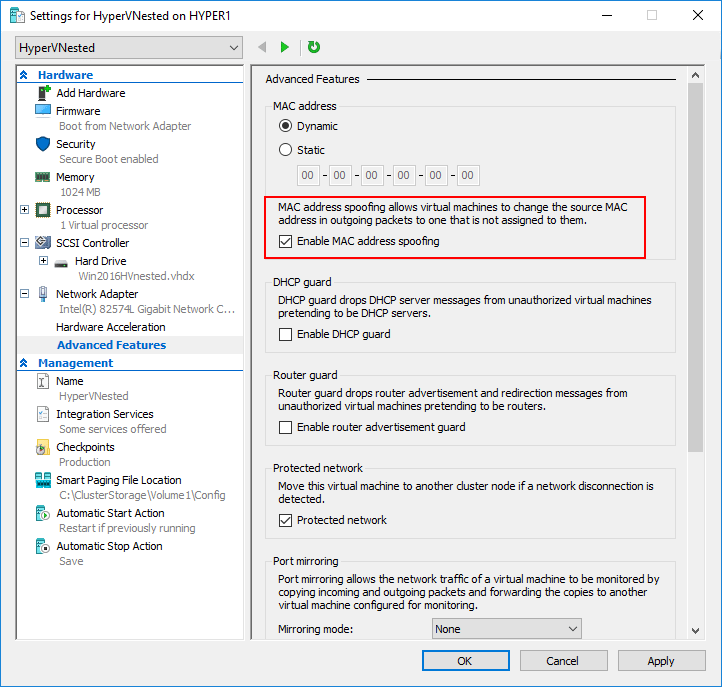

With Hyper-V, this is not enabled at the virtual switch level, but rather at the virtual machine level. In the properties of the virtual machine hosting the nested hypervisor, under Network Adapter >> Advanced Features, MAC address spoofing needs to be enabled. This addresses the same issue that VMware faced prior to ESXi 6.7 in running nested virtualization.
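MAC address spoofing can also be switched on from PowerShell rather than the VM settings dialog. This is a short sketch run on the physical Hyper-V host; the VM name `NestedHost01` is a hypothetical placeholder for the virtual machine that runs the nested Hyper-V role.

```powershell
# Assumption: 'NestedHost01' is a placeholder for the VM that hosts the nested hypervisor.
$vmName = 'NestedHost01'

# Enable MAC address spoofing on the network adapters of that VM.
Set-VMNetworkAdapter -VMName $vmName -MacAddressSpoofing On

# Confirm the setting for each adapter.
Get-VMNetworkAdapter -VMName $vmName | Format-List Name, MacAddressSpoofing
```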

Additionally, with Hyper-V, running a NAT configuration for the nested Hyper-V virtual machine networking is also supported. To create the NAT configuration in Hyper-V for nested virtualization:

New-VMSwitch -Name NAT_switch -SwitchType Internal
New-NetNat -Name LocalNAT -InternalIPInterfaceAddressPrefix "192.168.50.0/24"
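For traffic to flow through the NAT, the internal switch’s host-side adapter also needs an address in the NAT prefix to act as the gateway, and the nested virtual machines need addresses that point at it. The following is a sketch under assumptions: the addresses `192.168.50.1` and `192.168.50.2` and the guest adapter name `Ethernet` are illustrative choices, not values from this article.

```powershell
# Run inside the VM that hosts the nested Hyper-V role, after creating NAT_switch.
# Assumption: 192.168.50.1 is chosen as the gateway address within the 192.168.50.0/24 NAT prefix.
Get-NetAdapter -Name 'vEthernet (NAT_switch)' |
    New-NetIPAddress -IPAddress 192.168.50.1 -AddressFamily IPv4 -PrefixLength 24

# Run inside a nested VM attached to NAT_switch.
# Assumptions: the guest adapter is named 'Ethernet' and 192.168.50.2 is unused.
Get-NetAdapter -Name 'Ethernet' |
    New-NetIPAddress -IPAddress 192.168.50.2 -DefaultGateway 192.168.50.1 -AddressFamily IPv4 -PrefixLength 24
```

The two commands run on different machines. An internal NAT switch does not hand out addresses on its own, so each nested VM needs a static address (or a separately provided DHCP service) plus a DNS server setting.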

Other Hyper-V specific requirements

- Must be running Windows Server 2016

- Only Hyper-V is supported as the “guest” hypervisor

- Dynamic memory must be disabled

- SLAT must be supported by the processor
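The dynamic memory requirement from the list above can be handled from PowerShell on the physical Hyper-V host. This is a minimal sketch, again using the hypothetical VM name `NestedHost01`; the VM is shut down first because these settings cannot be changed while it is running.

```powershell
# Assumption: 'NestedHost01' is a placeholder for the VM that will run nested Hyper-V.
$vmName = 'NestedHost01'

# Shut the VM down before changing memory settings.
Stop-VM -Name $vmName

# Disable dynamic memory so the VM uses a fixed amount of startup memory.
Set-VMMemory -VMName $vmName -DynamicMemoryEnabled $false

# Review the resulting memory configuration.
Get-VMMemory -VMName $vmName | Format-List DynamicMemoryEnabled, Startup
```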

VMware vs Hyper-V Production Use Cases

The platforms differ in their use cases for nested virtualization. With VMware, there is really only one officially supported use case for nested virtualization, and that is the vSAN Witness Appliance, which is nothing more than a nested ESXi appliance.

Take a look at the official documentation from VMware on supported nested virtualization use cases:

With Hyper-V, the main Microsoft mentioned use case is for Hyper-V containers. This provides further isolation for containers by running them inside a VM in Hyper-V.

Takeaways

In this look at VMware vs Hyper-V Nested Virtualization, it is easy to see that there are similarities and differences between VMware and Hyper-V in their implementations and use cases involving nested virtualization. The supported functionality with nested virtualization will vary based on the platform you have running in your enterprise datacenter. Both VMware and Hyper-V provide really great functionality in their respective nested solutions. VMware certainly has the more robust and fully featured nested solution, especially with vSphere 6.7 and the new networking enhancements that have been made. Microsoft has at least finally broken ground and stepped into the world of supported nested virtualization. It will be interesting to see how this develops with subsequent Hyper-V releases.
