VMware Cloud Foundation (VCF) is a powerful, automated solution for deploying the full VMware stack and delivering an SDDC in your environment. It takes the heavy lifting out of provisioning the infrastructure stack so that you can deliver lifecycle updates and other changes in an automated way. However, there are design considerations and best practices to keep in mind with VMware Cloud Foundation. Let’s take a look at VMware Cloud Foundation design and best practices.

VMware Cloud Foundation Design and Best Practices

The first thing you want to consider is why you are deploying VMware Cloud Foundation. What are you trying to achieve? There is a key design departure with VCF in comparison to traditional vSphere environments and a tradeoff that comes into play.

You are essentially giving up customization for the sake of automation when you choose VCF. Many are used to tuning or tweaking ESXi or vSphere in snowflake environments to squeeze more performance or other advantages out of the system. However, the beauty of VCF lies in its consistency and automation. It is critical that it is deployed in accordance with best practices.

Since the name of the game with VMware Cloud Foundation is consistency, you can achieve the following:

- Automation

- Stability

- Scale

- Speed

Another key differentiator with VCF is that you are choosing it to run services and offer those services to your customers or end users. These services might include the following:

- Let them provision VMs

- Let them provision loadbalancers

- Let them provision other things

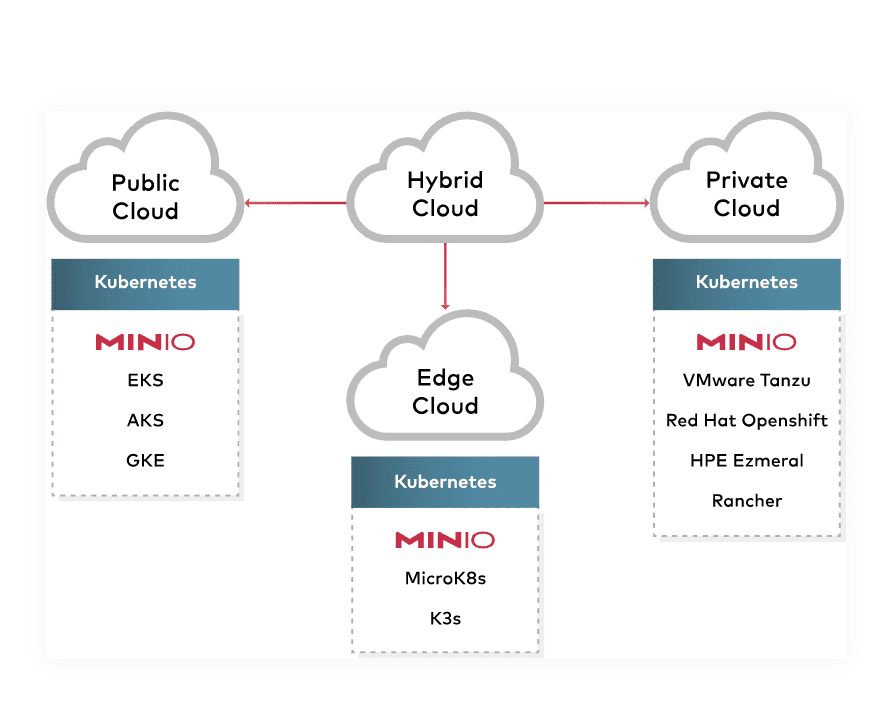

Other important questions include which services you want to deploy, who has access, and where they are deployed. What about availability zones? Different datacenters? Cloud extensions?

Designing your VCF installation around services first is the smart way to begin building your VCF infrastructure. It helps avoid the problems that surface later when these questions were not asked up front.

Physical Hardware for VCF

Let’s look at physical hardware considerations. When you are building out VCF, do consider the following:

- Use vSAN ready nodes and don’t try to squeeze the last bit of speed out of the configuration using customized hardware

- Use all flash when possible

- Flash delivers both speed and reliability

- Use enterprise SD flash, don’t use part of your flash for this

Think about how you want to lay out your clusters. Think about how many failures you can tolerate, not just of a host but of a cluster. vSAN is the core of everything in VCF, so you have to apply the failure rules of vSAN and vSphere hosts.

How do you want to handle DR in the design? Think through how each part of the environment can survive a failure.

A great design principle to go by when determining the number of hosts in your VCF cluster:

Good rule of thumb

- Design your hosts so that you can have a host in maintenance mode and also have a failed host. Design for this scenario.
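The rule of thumb above can be turned into a quick sizing check. The sketch below is a simplified model, not a VMware sizing tool: it assumes RAID-1 mirroring (which needs 2 x FTT + 1 hosts) and that the hosts left after one is in maintenance and one has failed must still carry the workload and satisfy that vSAN minimum. The function names are illustrative.

```python
def min_hosts_for_mirroring(ftt: int) -> int:
    """Minimum hosts vSAN RAID-1 mirroring needs for a given failures-to-tolerate."""
    if ftt < 1:
        raise ValueError("ftt must be at least 1")
    return 2 * ftt + 1


def cluster_size(workload_hosts: int, ftt: int = 1,
                 in_maintenance: int = 1, failed: int = 1) -> int:
    """Hosts to buy so the cluster still works with hosts in maintenance AND failed.

    workload_hosts: hosts needed purely for compute/storage capacity (assumed input).
    """
    if workload_hosts < 1:
        raise ValueError("workload_hosts must be at least 1")
    # What must remain healthy after the planned and unplanned outages.
    must_remain = max(workload_hosts, min_hosts_for_mirroring(ftt))
    return must_remain + in_maintenance + failed


if __name__ == "__main__":
    for needed in (2, 4, 8):
        print(f"{needed} workload hosts -> plan for {cluster_size(needed)} hosts")
```

Treat the result as a starting point and validate it against the vSAN Planning and Deployment Guide for your storage policy.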

Physical considerations – Fault domains.

Design your fault domains around the number of racks you have, and account for the failure of an entire rack.

Each rack in a datacenter tends to have similar fault points:

- Shared power, shared networking

You will have a rack failure at some point, so design for this possibility

- More, smaller nodes may provide better building blocks for availability

Do not accept the “two switches will never fail at the same time” theory. It can and WILL happen at some point.

- A common firmware bug can take down both switches at once

Pay specific attention to your management domain, where many of your services (such as vRA) start and execute from

- Service availability often counts towards total uptime

Do not assume a 2 rack design protects vSAN.

- vSAN does not respect 2 racks as fault domains – it will not distribute workloads across racks as it has no visibility into this

- Consider a stretched cluster configuration for vSAN if only 2 racks are possible

- Your witness VM must be OUTSIDE your VCF installation
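To see why two racks alone do not protect vSAN, it helps to model one mirrored object. The sketch below is a deliberately simplified illustration: it assumes an FTT=1 mirrored object has three equally weighted components (two replicas and a witness) and stays available only while more than half of them are reachable. Rack names are made up.

```python
from collections import Counter


def survives_any_rack_loss(component_racks: list[str]) -> bool:
    """True if the object keeps a majority of components after losing any one rack.

    component_racks: the rack (fault domain) holding each component of one object.
    """
    total = len(component_racks)
    per_rack = Counter(component_racks)
    # Losing a rack removes every component placed in it.
    return all((total - lost) * 2 > total for lost in per_rack.values())


if __name__ == "__main__":
    # Two racks: one rack must hold two of the three components.
    print(survives_any_rack_loss(["rack-a", "rack-a", "rack-b"]))
    # Three fault domains, for example two racks plus an external witness.
    print(survives_any_rack_loss(["rack-a", "rack-b", "witness-site"]))
```

With only two racks, some rack always holds a majority of an object's components, which is why a third fault domain or a stretched cluster with an external witness is needed.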

vSAN planning guide – Bible for understanding how you can tolerate failures.

Read the principles and points found in the vSAN Planning and Deployment Guide.

Availability zones

- Each AZ should operate independently as just a bunch of resources

- Remove interdependencies whenever possible

- Treat each VCF deployment as an AZ

–All workload domains share common management with the management domain

- Standardize your design/layouts

–Single design for all data centers

–Single design for ROBOs (as appropriate)

Environments

- Plan to have an infrastructure playground VCF environment

- Test how upgrades work in the environment

- Integrate new hardware to existing configurations

- Should not be used by anyone but the VCF Engineering team

- Test automated deployment code

Networking

Use the modern leaf & spine networking designs:

Minimum of 2X Top of Rack Switches (TOR)

- 25 Gbps+ highly recommended

- Support capabilities such as ACL, DHCP relay

Jumbo frames, jumbo frames, jumbo frames

DHCP is required for NSX – No MAC reservations

- There is no way of knowing what the VTEP MAC will be until it is created by the deployment automation

Separate cluster vSAN and vMotion networks (prioritize to different adapters)

Size TCP/IP networks based on expected maximum growth

- Don’t underestimate how rapidly VCF will scale!
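Sizing a subnet for maximum growth is simple arithmetic that is easy to script. The following sketch uses Python's standard `ipaddress` module; the three reserved addresses (network, broadcast, gateway) and the example address range are assumptions for illustration.

```python
import ipaddress


def prefix_for_hosts(max_hosts: int, reserved: int = 3) -> int:
    """Longest IPv4 prefix whose subnet fits max_hosts plus reserved addresses."""
    if max_hosts < 1:
        raise ValueError("max_hosts must be at least 1")
    needed = max_hosts + reserved
    # Smallest number of host bits with 2**bits >= needed.
    host_bits = (needed - 1).bit_length()
    return 32 - host_bits


if __name__ == "__main__":
    expected_peak = 300  # hosts you expect at maximum growth, not at day 1
    prefix = prefix_for_hosts(expected_peak)
    subnet = ipaddress.ip_network(f"172.16.0.0/{prefix}")
    print(subnet, "holds", subnet.num_addresses, "addresses")
```

Feed it the host count you expect at full scale rather than the initial deployment, since resizing these networks later is disruptive.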

Always set up vMotion to be L3 routable

- There will most likely be a future reason to evacuate a cluster or move workloads. Configure this up front so that it is ready, tested, and available when you need it

- Does not need to route to tenant space

Workload Mobility

Design for VM mobility between racks within Availability Zone

Assume VM mobility between workload domains

- In case one ever needs to be rebuilt

Assume VM mobility between availability zones

- Proactive disaster avoidance

- Replacement of hardware

Design for this on day 1, even if you do not initially offer it as a service

- This is very complicated to change after initially deployed and configured

Authentication and Authorization

Authorization

- 2 factor is not currently supported via VCF

- Creates local accounts in local registries

- SDDC Manager uses dual factor authentication for its API

Identity Management

- vSphere 7 is moving away from IWA

- Design for identity federation

- Consider whether you REALLY need ESXi integration with AD

Credential Storage (External Vault)

- SDDC Manager provides APIs for updating and rotating passwords. SDDC Manager is the source of truth for accounts

- Create automation to use the APIs for your vault

- Vault must engage a password rotation on SDDC Manager

- Always perform the rotation from the external vault

- If you own CyberArk, install the VCF plug-in from CyberArk
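The flow described above, where the vault drives the rotation and SDDC Manager applies it, might be sketched as follows. This is a minimal outline under stated assumptions: `SddcClient` and `Vault` are hypothetical interfaces standing in for your own wrappers around the SDDC Manager credentials API and your vault product, and none of the method names come from a real SDK.

```python
import secrets
from typing import Protocol


class SddcClient(Protocol):
    """Hypothetical wrapper around the SDDC Manager password update API."""
    def update_password(self, account: str, new_password: str) -> None: ...


class Vault(Protocol):
    """Hypothetical wrapper around the external vault."""
    def stage(self, account: str, password: str) -> None: ...
    def commit(self, account: str) -> None: ...
    def discard(self, account: str) -> None: ...


def rotate_from_vault(account: str, sddc: SddcClient, vault: Vault) -> None:
    """Rotate one account, with the vault initiating and SDDC Manager applying."""
    new_password = secrets.token_urlsafe(24)
    # Stage first so the new secret is never known only to SDDC Manager.
    vault.stage(account, new_password)
    try:
        sddc.update_password(account, new_password)
    except Exception:
        # SDDC Manager rejected the change: keep the old secret current.
        vault.discard(account)
        raise
    vault.commit(account)
```

Staging before applying is a design choice in this sketch: if the update fails, the vault still holds the working password, and if it succeeds, the vault already has the new one.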

Designing for Scale

Bare Metal Edges

- Use when you need high packets per second, not high throughput

- Build a PXE based imaging solution

- Use a policy-based solution with an automatable API for the configuration of hardware

Design from the ground up to deploy with automation using standardized tooling

- Standardization is key to a smoothly running and EXPANDING solution

- Use Pipelining to control individual deployments and configurations

- Orchestration/automation shouldn’t be artificially constrained to one language/tool

–Use what works best for what you’re deploying

–Don’t choose between Python and PowerCLI – use both

- Recognize this means your FIRST solution will take significantly longer

VCF is Infrastructure as Code through its JSON configurations

- Keep the ones you deploy, use them to deploy others going forward
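Reusing a known-good JSON configuration usually means changing values while leaving the structure alone. The sketch below shows one way to derive a new spec from a saved one and confirm the layout is unchanged. The keys in the example are hypothetical and do not reflect the actual VCF JSON schema.

```python
import copy


def layout(node):
    """Key structure of a JSON value, with leaf values reduced to their type names."""
    if isinstance(node, dict):
        return {key: layout(value) for key, value in node.items()}
    if isinstance(node, list):
        return [layout(value) for value in node]
    return type(node).__name__


def derive_spec(base: dict, overrides: dict) -> dict:
    """Copy a saved spec and replace existing values at dotted paths.

    Raises KeyError for a path that is not in the base spec and ValueError
    if an override would change the layout (for example, a string to a dict).
    """
    spec = copy.deepcopy(base)
    for path, value in overrides.items():
        *parents, leaf = path.split(".")
        node = spec
        for key in parents:
            node = node[key]
        if leaf not in node:
            raise KeyError(path)
        node[leaf] = value
    if layout(spec) != layout(base):
        raise ValueError("override changed the layout of the spec")
    return spec


if __name__ == "__main__":
    saved = {"domainName": "wld01", "vcenter": {"name": "vc01", "ip": "10.0.0.10"}}
    new = derive_spec(saved, {"domainName": "wld02", "vcenter.name": "vc02",
                              "vcenter.ip": "10.0.1.10"})
    print(new)
```

Keeping the saved specs in version control alongside a helper like this gives you a repeatable starting point for each new deployment.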

Backup and Disaster Recovery

These are two different things. Backup recovers from corruption of the data. Disaster recovery recovers from the loss of data.

Backup

- You NEED to backup critical components

–vCenter, SDDC Manager, NSX

- Use their file-based external backup capabilities EVEN if you are using a full backup solution

- Backup to HA sources OUTSIDE of VCF

Disaster Recovery

- DR is not part of the VCF solution

- If DR of management is required, place vRealize services on a stretched VLAN/VXLAN between VCF instances

- Standard DR designs work just fine. These do not hinder VCF

Getting Ready for install

Putting VMware Cloud Foundation Design and Best Practices into practice. Remember the golden rule with VCF – No tinkering with VCF conventions

- All settings configured by SDDC Manager should not be modified

- Day 2 ops will fail!

APIs are your friend

- Build out your VCF from automation

- The JSON templates have specific layouts, so do not modify the layout

- Use the samples suggested on code.vmware.com

Naming is critical

- Figure it out early and consider it permanent

- Any convention change requires a complete rebuild of hosts, clusters, workload domains, or the entire VCF instance
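Because a naming mistake is so costly to undo, it can be worth validating names before anything is deployed. The sketch below checks names against a purely hypothetical convention, `<site>-<domain>-<role><index>`; substitute your own pattern.

```python
import re

# Hypothetical convention, e.g. "dc1-wld01-esx07":
#   site   = two letters + one digit
#   domain = "mgmt" or "wld" + two digits
#   role   = "esx", "vc" or "nsx" + two-digit index
NAME_PATTERN = re.compile(
    r"(?P<site>[a-z]{2}\d)-(?P<domain>(?:mgmt|wld)\d{2})-(?P<role>esx|vc|nsx)(?P<index>\d{2})"
)


def invalid_names(names: list[str]) -> list[str]:
    """Return the names that do not follow the convention, in input order."""
    return [name for name in names if NAME_PATTERN.fullmatch(name) is None]


if __name__ == "__main__":
    planned = ["dc1-wld01-esx07", "DC1-wld01-esx08", "dc1-mgmt01-vc01"]
    bad = invalid_names(planned)
    if bad:
        raise SystemExit(f"fix these names before bring-up: {bad}")
```

Running a check like this in the deployment pipeline catches violations while they are still cheap to fix.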

Cloud Builder VM

- Should be a temporary appliance for bring-up and destroyed once complete

- Don’t try to productionize the appliance by putting it in your CMDB, etc.

- Not meant for production, temporary appliance. You can and should remove it. This appliance is only meant to be around for a couple of hours

Great VCF presentation at VMworld

Most of the information provided above is found in a really great VMworld 2020 presentation:

- Become a Superhero Architect of VMware Cloud Foundation 4 [HCP1978]

Wrapping Up

If you are looking at deploying a fully automated SDDC stack, VCF is the tool to get this done, and it provides great benefits for Day 2 operations. There are many design and best practices decisions to make with VCF, and hopefully the notes above will help anyone looking to deploy VCF the right way and without any gotchas down the road.
