Best Docker Container Commands You Need to Know

Understanding the basics is important when working with Docker. Let’s look at the best Docker container commands you need to know to master working with Docker.

Docker in the home lab

I run Docker containers extensively in the home lab because they are one of the easiest ways to spin up new services and solutions. With Docker, you don’t have to worry about installing all the underlying prerequisites and technologies. These are contained in the image itself.

Docker doesn’t replace virtual machines entirely. Rather, it allows you to run a much more efficient data center: you can use VMs as container hosts and then use containers to run your applications, instead of configuring a separate virtual machine for each application as we used to.

Docker Images and Docker Containers

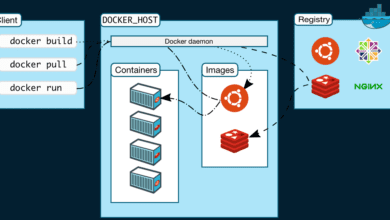

Understanding Docker begins with understanding two key concepts: Docker images and Docker containers. A Docker image is a read-only template that gives Docker the instructions needed to create a new Docker container. A Docker container is a running instance of an image, and it holds all the information and components needed to run an application.

Docker images find their home in a Docker registry like Docker Hub. This service functions as a warehouse for pre-built images of applications such as Nginx, Apache, and Memcached (a distributed memory caching system), and as a platform for users to share their custom Docker images.

Docker containers are the constructs where Docker images are run on a Docker container host, using the Docker runtime.

As developers continually strive for optimization, “best Docker images” and “best Docker containers” often crop up in discussions, highlighting the demand for high-quality, performance-oriented Docker resources.

Docker Images: The Building Blocks for Successful Docker Projects

Developers can generate Docker images using a Dockerfile, a textual document containing commands for building an image. Upon preparing the Dockerfile, the docker build command comes into play, creating the Docker image.

Below is a simple example of a Dockerfile used to build an Ubuntu 22.04 container:

    FROM ubuntu:22.04
    ENV DEBIAN_FRONTEND=noninteractive
    RUN apt-get update
    ENTRYPOINT bash

A Docker image can package everything required to run an application: code, a runtime, libraries, environment variables, and config files. This compact, all-inclusive structure makes Docker a good fit for Continuous Integration, offering a consistent and reproducible software development environment.
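To turn a Dockerfile into an image, you run docker build against the directory that contains it. Here is a minimal sketch of that step wrapped in a small shell function. The function name build_image and the tag my-ubuntu:22.04 are placeholders chosen for illustration, not anything Docker requires.

```shell
# Hypothetical helper: build the Dockerfile found in a directory and tag the result.
# Usage: build_image <tag> [build-context-directory]
build_image() {
  # -t names the image; the last argument is the build context
  # (defaults to the current directory when omitted)
  docker build -t "$1" "${2:-.}"
}

# Example (run from the directory that holds the Dockerfile):
#   build_image my-ubuntu:22.04
#   docker run -it --rm my-ubuntu:22.04   # interactive shell, removed on exit
```

Tagging the image at build time means you can refer to it by name later instead of by its generated ID.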

Docker Compose: Orchestrating Multiple Containers Seamlessly

Docker Compose makes complex applications more manageable by letting you define multiple containers in a single file and run them together as one application.

Moreover, Docker Compose’s built-in support for managing networks and volumes offers greater flexibility to developers, fostering efficient deployment of multi-service applications. This powerful functionality solidifies Docker Compose’s position as a vital tool for developers and system administrators managing multiple containers.
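Day to day, a Compose project is driven with just a few subcommands. The sketch below assumes the Compose v2 plugin (invoked as docker compose) and a file named docker-compose.yml in the project directory. The helper names compose_up and compose_down are my own placeholders.

```shell
# Hypothetical helper: start every service in the project in the background,
# then list the project's containers and their state.
# Usage: compose_up [project-directory]
compose_up() {
  docker compose -f "${1:-.}/docker-compose.yml" up -d &&
    docker compose -f "${1:-.}/docker-compose.yml" ps
}

# Hypothetical helper: stop and remove the project's containers and networks.
# Named volumes are left in place unless you add -v to "down".
# Usage: compose_down [project-directory]
compose_down() {
  docker compose -f "${1:-.}/docker-compose.yml" down
}

# Example:
#   compose_up ~/lab
#   compose_down ~/lab
```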

Below is an example of a Docker Compose file:

    services:
      traefik2:
        image: traefik:latest
        restart: always
        command:
          - "--log.level=DEBUG"
          - "--api.insecure=true"
          - "--providers.docker=true"
          - "--providers.docker.exposedbydefault=true"
          - "--entrypoints.web.address=:80"
          - "--entrypoints.websecure.address=:443"
          - "--entrypoints.web.http.redirections.entryPoint.to=websecure"
          - "--entrypoints.web.http.redirections.entryPoint.scheme=https"
        ports:
          - 80:80
          - 443:443
        networks:
          - traefik
        volumes:
          - /var/run/docker.sock:/var/run/docker.sock
        container_name: traefik
      pihole:
        image: pihole/pihole:latest
        container_name: pihole
        ports:
          - "53:53/tcp"
          - "53:53/udp"
        dns:
          - 127.0.0.1
          - 1.1.1.1
        environment:
          TZ: 'America/Chicago'
          WEBPASSWORD: 'password'
          PIHOLE_DNS_: 1.1.1.1;9.9.9.9
          DNSSEC: 'false'
          VIRTUAL_HOST: piholetest.cloud.local # Same as port traefik config
          WEBTHEME: default-dark
          PIHOLE_DOMAIN: lan
        volumes:
          - '~/pihole/pihole:/etc/pihole/'
          - '~/pihole/dnsmasq.d:/etc/dnsmasq.d/'
        restart: always
        networks:
          - traefik
        labels:
          - traefik.enable=true
          - traefik.http.routers.pihole.rule=Host(`piholetest.cloud.local`)
          - traefik.http.routers.pihole.tls=true
          - traefik.http.routers.pihole.entrypoints=websecure
          - traefik.http.services.pihole.loadbalancer.server.port=80
    networks:
      traefik:
        driver: bridge
        name: traefik
        ipam:
          driver: default

Best Docker Commands to know

You can handle most day-to-day management of Docker containers and container images with the following 10 commands. While there are open source user interface solutions you can use, knowing the Docker command line is definitely beneficial.

docker pull: Fetches Docker images from Docker Hub or other Docker registries.

docker run: Creates a new container from an image and initiates it.

docker stop: Halts a running container.

docker start: Powers up a halted container.

docker restart: Stops and then starts a container, whether it is running or halted.

docker rm: Deletes a Docker container.

docker logs: Retrieves the logs of a Docker container.

docker stats: Provides live data about running containers.

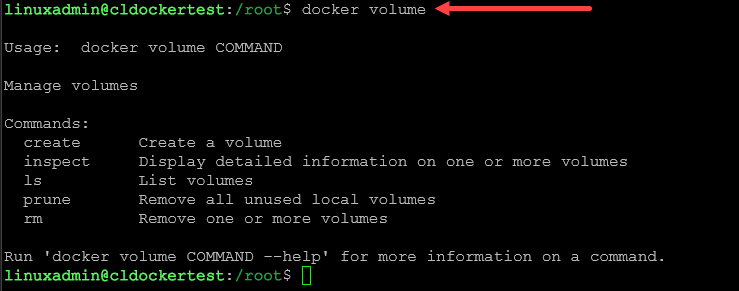

docker volume: Manages the volumes associated with containers.

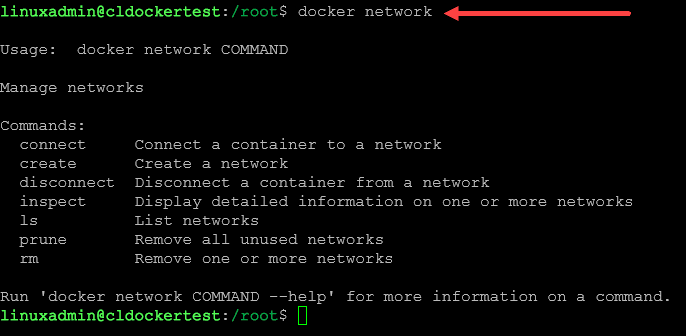

docker network: Handles networking aspects of Docker containers.

These commands are integral to managing Docker containers, whether you are using Docker containers in the home lab or in the enterprise. Even if you use the best Docker apps, you will undoubtedly need to manage the container and image using the following commands.

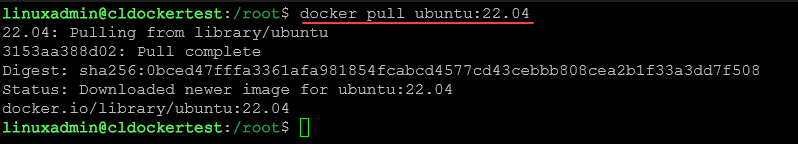

1. Docker Pull

The docker pull command fetches Docker images from Docker Hub or other Docker registries. Once pulled, the image is stored locally and can be used to create containers.

    docker pull ubuntu:22.04

This command downloads the Ubuntu 22.04 image.

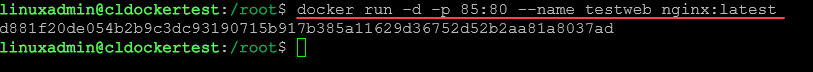

2. Docker Run

The docker run command creates and runs a new container from an image.

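    docker run -d -p 80:80 --name <name your container> nginx:latest

This command starts an Nginx server container in detached mode and publishes it on port 80 of the host. Replace <name your container> with a name of your choice, such as “my_server”. Also, be sure to adjust the external port (the first 80) if needed to avoid conflicts.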
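If port 80 is already taken on your host, only the host side of the -p mapping needs to change. As a rough sketch, the function below takes the container name and an optional host port. The name run_nginx is a placeholder of my own.

```shell
# Hypothetical helper: start Nginx detached, publishing a chosen host port.
# Usage: run_nginx <container-name> [host-port]
run_nginx() {
  # -d       run in the background (detached)
  # -p H:C   map host port H to container port C (Nginx listens on 80)
  # --name   a name you can later pass to stop, start, logs, and rm
  docker run -d -p "${2:-80}:80" --name "$1" nginx:latest
}

# Example:
#   run_nginx my_server 8080   # then browse to http://localhost:8080
```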

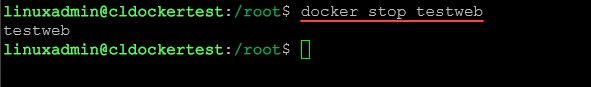

3. Docker Stop

The docker stop command halts a running container.

    docker stop <your container name>

This command stops the named container, for example “my_server”.

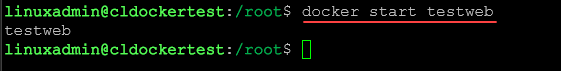

4. Docker Start

The docker start command powers up a halted container.

    docker start <your container name>

This command starts the stopped container, for example “my_server”.

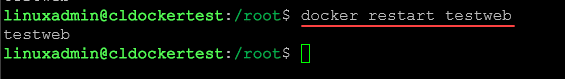

5. Docker Restart

The docker restart command stops a container and then starts it again.

    docker restart <your container name>

This command restarts the named container, for example “my_server”.

6. Docker Rm

The docker rm command removes a Docker container.

    docker rm <your container name>

This command removes the named container, for example “my_server”.
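One thing to keep in mind is that docker rm refuses to remove a container that is still running. A minimal sketch of the usual stop-then-remove sequence follows. The function name remove_container is a placeholder of my own.

```shell
# Hypothetical helper: stop a container, then remove it.
# The removal only runs if the stop succeeded.
# Usage: remove_container <container-name>
remove_container() {
  docker stop "$1" && docker rm "$1"
}

# Example:
#   remove_container my_server
```

You can also use docker rm -f, which removes a running container in one step by killing it first. Stopping it cleanly is the gentler option for anything that writes data.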

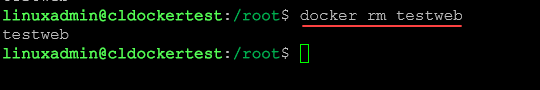

7. Docker Logs

The docker logs command fetches the logs of a Docker container.

    docker logs <your container name>

This command retrieves the logs of the named container, for example “my_server”.
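For a chatty container, dumping the entire log is rarely what you want. The sketch below combines the --tail and -f options to show only recent lines and then keep streaming. The function name tail_logs and the default of 50 lines are my own choices for illustration.

```shell
# Hypothetical helper: show the last N log lines, then follow new output.
# Usage: tail_logs <container-name> [lines]
tail_logs() {
  # --tail limits how much history is printed
  # -f keeps streaming new lines until you press Ctrl+C
  docker logs --tail "${2:-50}" -f "$1"
}

# Example:
#   tail_logs my_server 100
```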

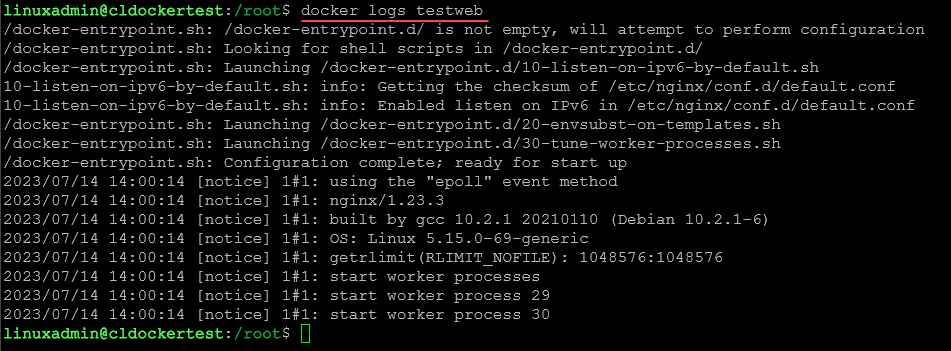

8. Docker Stats

The docker stats command provides live data about running containers.

    docker stats <your container name>

This command shows a live data stream for the named container, for example “my_server”.
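By default docker stats keeps refreshing until you interrupt it, which is awkward in scripts. As a small sketch, the --no-stream option prints a single snapshot and exits. The function name stats_snapshot is a placeholder of my own.

```shell
# Hypothetical helper: print one snapshot of resource usage and exit.
# With no arguments it covers all running containers.
# Usage: stats_snapshot [container-name ...]
stats_snapshot() {
  docker stats --no-stream "$@"
}

# Example:
#   stats_snapshot my_server
```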

9. Docker Volume

The docker volume command manages the volumes tied to Docker containers.

    docker volume create <volume name>

This command creates a new volume with the given name, for example “my_volume”.
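A volume only becomes useful once it is mounted into a container. Here is a minimal sketch, assuming the official nginx image, whose web root is /usr/share/nginx/html. The function name run_with_volume is a placeholder of my own.

```shell
# Hypothetical helper: create a named volume and mount it into an Nginx container.
# Usage: run_with_volume <volume-name> <container-name>
run_with_volume() {
  # -v <volume>:<path> mounts the named volume at a path inside the container,
  # so data written there survives removing and recreating the container
  docker volume create "$1" &&
    docker run -d --name "$2" -v "$1:/usr/share/nginx/html" nginx:latest
}

# Example:
#   run_with_volume my_volume my_server
#   docker volume ls   # lists the volumes Docker knows about
```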

10. Docker Network

The docker network command handles networking aspects of Docker containers.

    docker network create <network name>

This command creates a new network with the given name, for example “my_network”.
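After creating a network, you attach containers to it. The sketch below creates a user-defined network and connects an existing container. The function name join_network is a placeholder of my own, and the create step will fail if a network with that name already exists.

```shell
# Hypothetical helper: create a network and attach an existing container to it.
# Usage: join_network <network-name> <container-name>
join_network() {
  docker network create "$1" && docker network connect "$1" "$2"
}

# Example:
#   join_network my_network my_server
#   docker network inspect my_network   # shows the attached containers
```

Containers on the same user-defined bridge network can reach each other by container name, which is what makes setups like a reverse proxy in front of other services work.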

Wrapping up

There are many great Docker commands that can help you run Docker containers, configure them, and do a better job in troubleshooting when things go wrong. Hopefully this roundup of Docker container commands will help you get started with a few commands you may not have known.

How do I list Docker images and instances, definitively?

Atul,

Hey you can list your Docker images using “docker image ls” and then also see your running containers with “docker ps”. If you want to see all containers, even those stopped, you can do “docker ps -a”

Brandon