Are you making the transition to Kubernetes in your learning path? If you haven’t taken the plunge yet, there are many great tools available for learning Kubernetes and spinning up a K8s cluster quickly and easily. Today I want to detail three technologies that, used together, make spinning up a Kubernetes cluster extremely easy. We will look at installing K3s on Ubuntu with K3D in Docker.

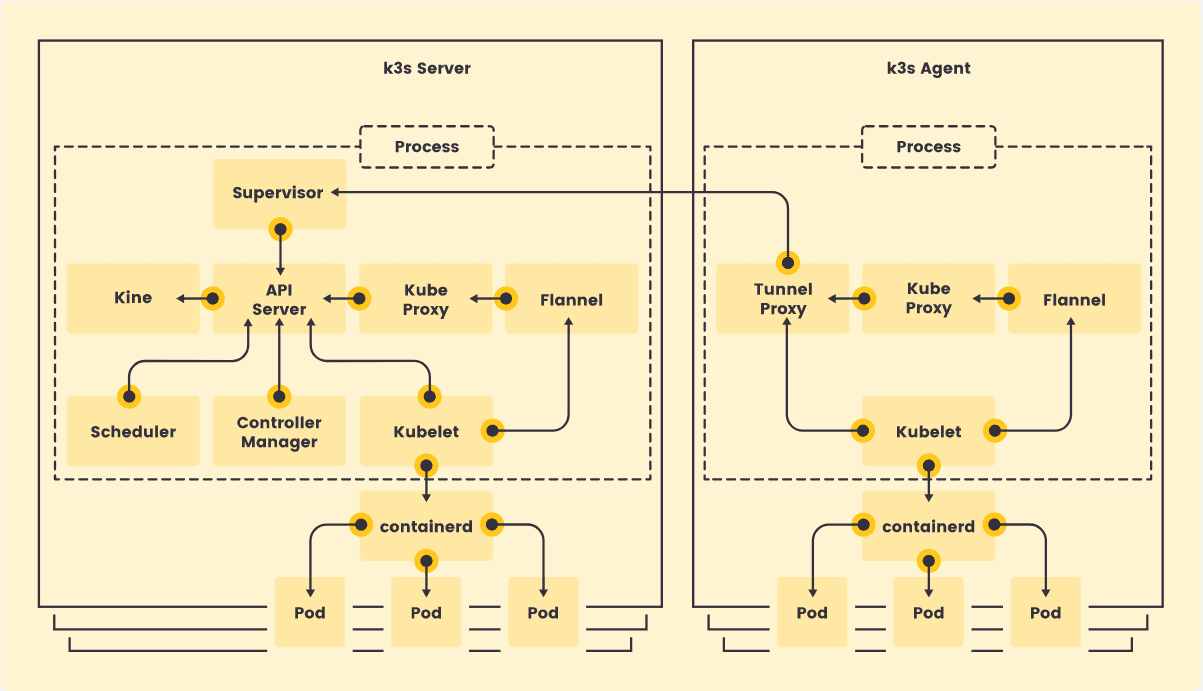

What is K3s?

K3s is a lightweight Kubernetes distribution built for IoT and edge computing environments. It is highly available and meant for production workloads. K3s is packaged as a single binary of less than 50 MB, which reduces the dependencies and steps needed to install and run Kubernetes. You can also run it on a Raspberry Pi cluster, as both ARM64 and ARMv7 architectures are supported.

You can learn more about K3s at the official site here:

What is K3D?

The K3D utility is a lightweight tool that allows running K3s inside Docker containers. You can create single-node and multi-node clusters using the K3s distribution in Docker for local development, learning, labbing, PoCs, etc.

It is free and open-source and is not an official Rancher solution. You can learn more about K3D from the official site here:

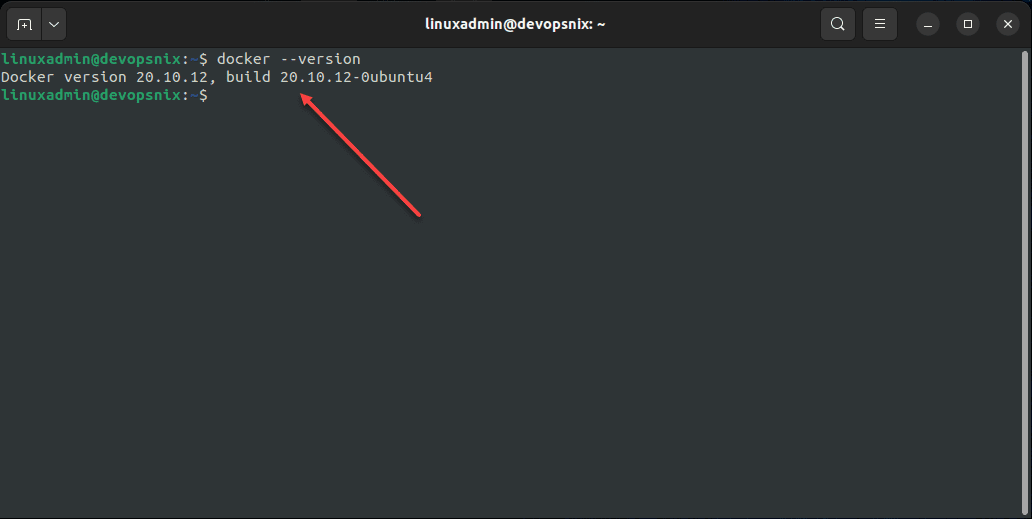

Ubuntu 22.04 and Docker

For my little K3s and K3D lab, I am using a fresh Ubuntu 22.04 LTS workstation running Docker. To install Docker on the Ubuntu 22.04 LTS workstation, I simply followed the official Docker documentation found here:

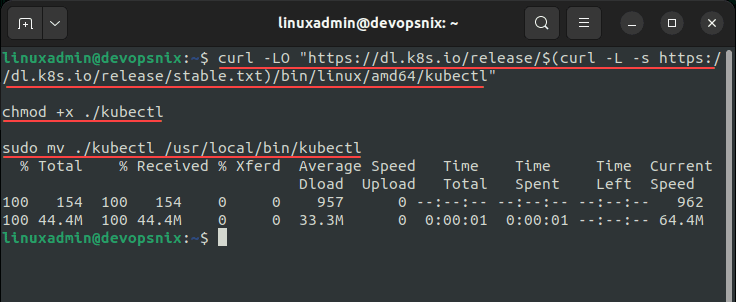

Installing kubectl

One of the first utilities we want to install in Ubuntu 22.04 LTS is kubectl. The kubectl utility is the de facto standard for interacting with Kubernetes. To install kubectl in Linux, run the following three commands:

curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl"

chmod +x ./kubectl

sudo mv ./kubectl /usr/local/bin/kubectl

Now, let’s proceed on to installing K3D.
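One aside before moving on: the download URL above is hard-coded for amd64. Since K3s also targets ARM boards, here is a minimal sketch of how the architecture segment of that URL could be chosen from `uname -m`. The helper names `k8s_arch` and `kubectl_url` are hypothetical, the version tag `v1.30.0` is only a placeholder, and the `arm` mapping for 32-bit boards is an assumption to verify against the release you want.

```shell
#!/bin/sh
# Map a machine type (as printed by `uname -m`) to the architecture
# name used in the kubectl download URL. Helper names are illustrative.
k8s_arch() {
    case "$1" in
        x86_64|amd64)   echo "amd64" ;;
        aarch64|arm64)  echo "arm64" ;;
        armv7l)         echo "arm" ;;   # assumption: 32-bit ARM build
        *)              echo "unsupported"; return 1 ;;
    esac
}

# Build the download URL for a given release tag and machine type.
kubectl_url() {
    version="$1"
    arch="$(k8s_arch "$2")" || return 1
    echo "https://dl.k8s.io/release/${version}/bin/linux/${arch}/kubectl"
}

# Placeholder version tag; in practice, read the current tag from
# https://dl.k8s.io/release/stable.txt as the curl command above does.
kubectl_url "v1.30.0" "$(uname -m)" || echo "no mapping for $(uname -m)"
```

Once the binary is in place, `kubectl version --client` should print the client version without needing a cluster.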

Installing K3D

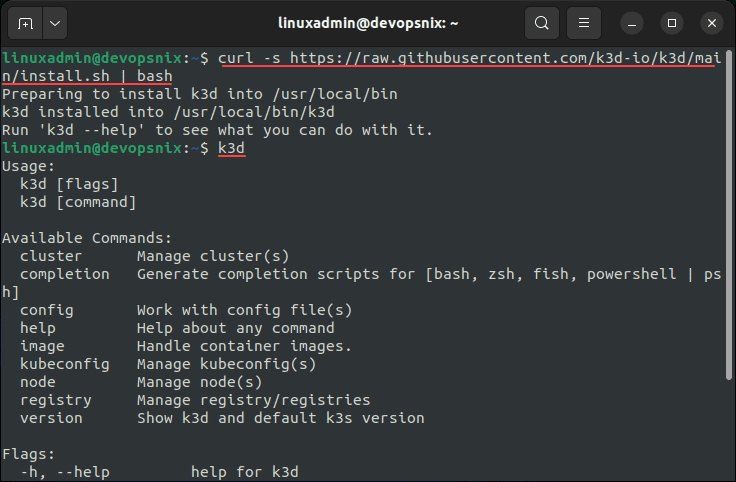

The process of installing K3D is straightforward and is a simple one-liner. Use the following to install the K3D utility:

curl -s https://raw.githubusercontent.com/k3d-io/k3d/main/install.sh | bash

Once you install the K3D utility, you can make sure it is installed and running by using the k3d command like so:

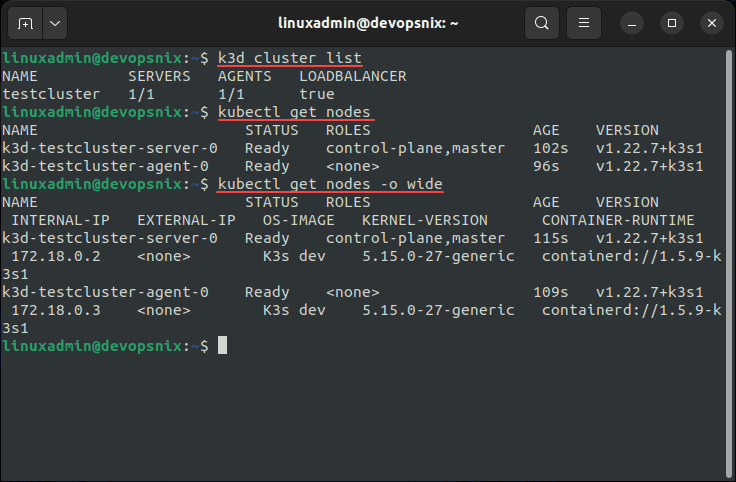

k3d

Creating the K3s cluster
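Before creating the cluster, it can help to confirm that all three tools from the previous sections are on the PATH. The following is a small sketch of such a check; the function name `missing_tools` is made up for illustration.

```shell
#!/bin/sh
# Print each named tool that is NOT found on the PATH, one per line.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}

missing="$(missing_tools docker kubectl k3d)"
if [ -n "$missing" ]; then
    # $missing is left unquoted on purpose so the names print on one line
    echo "Not installed yet:" $missing
else
    echo "docker, kubectl, and k3d are all available"
    k3d version   # shows the installed k3d version
fi
```

If anything is reported as missing, revisit the matching install step above before continuing.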

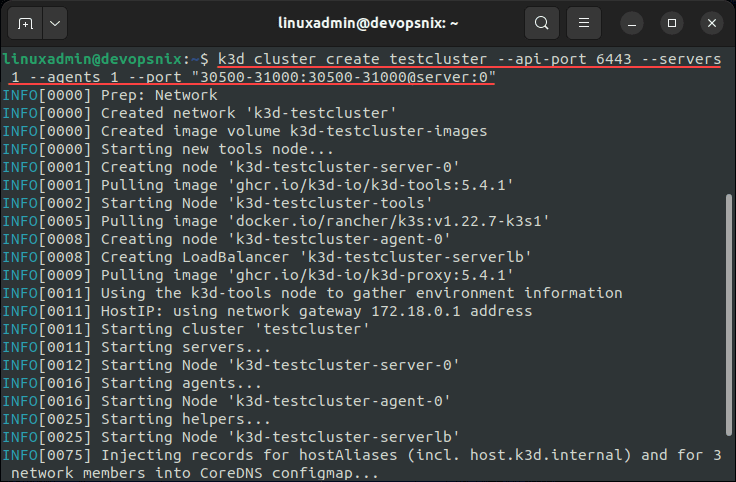

Creating a K3s cluster with the K3D command is a simple one-liner. To create the cluster, use the command shown after this list. In that command:

- The k3d command creates a cluster named testcluster

- The API port is set to 6443

- In K3D/K3s, the server equates to the control node

- In K3D/K3s, the agent equates to the worker node

- The --port flag tells K3D to expose services on NodePorts. Here, we are exposing ports 30500-31000. As a note, I am not exposing the full NodePort range because when I tried exposing all of the ports, the cluster crashed while coming up. So, I lowered the number of exposed NodePorts to roughly 500.

k3d cluster create testcluster --api-port 6443 --servers 1 --agents 1 --port "30500-31000:30500-31000@server:0"

Once the cluster is created, we can start interacting with the cluster using either the K3D utility or kubectl.
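Looking back at the port mapping in that command, a little arithmetic shows how much smaller the chosen range is than the default Kubernetes NodePort range of 30000-32767. This is only a sketch: `port_count` and `k3d_create_cmd` are hypothetical helpers, and the second one just prints the command so you can review it before running it.

```shell
#!/bin/sh
# Count the ports in an inclusive range such as 30500-31000.
port_count() {
    echo $(( $2 - $1 + 1 ))
}

# Assemble the k3d command from the post for a given cluster name and
# NodePort range, and print it instead of running it.
k3d_create_cmd() {
    name="$1"; first="$2"; last="$3"
    echo "k3d cluster create ${name} --api-port 6443 --servers 1 --agents 1 --port \"${first}-${last}:${first}-${last}@server:0\""
}

echo "Range 30500-31000 maps $(port_count 30500 31000) ports"
echo "Default range 30000-32767 would map $(port_count 30000 32767) ports"
k3d_create_cmd testcluster 30500 31000
```

Because the range is inclusive, 30500-31000 works out to 501 ports, versus 2768 for the full default range.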

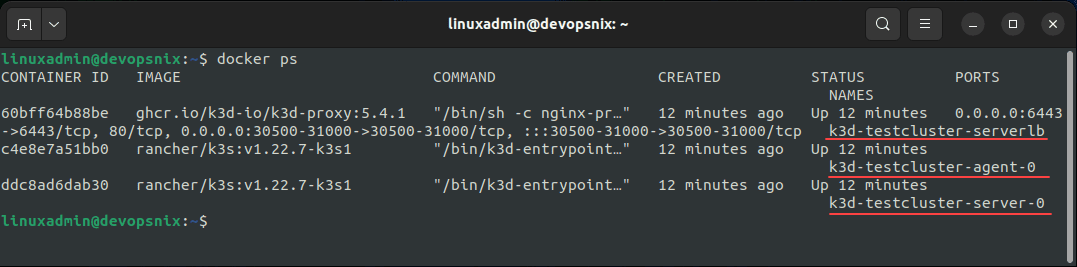

The really cool thing to see is running a docker ps and seeing the Kubernetes nodes running as Docker containers along with the load balancer! Cool stuff.
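To make that concrete, here is a sketch of which container names to look for. It assumes K3D's usual naming pattern of `k3d-<cluster>-server-N`, `k3d-<cluster>-agent-N`, and `k3d-<cluster>-serverlb`; the function `k3d_container_names` is hypothetical and only prints the expected names.

```shell
#!/bin/sh
# Print the container names k3d is expected to create for a cluster:
# one per server, one per agent, plus the load balancer.
k3d_container_names() {
    cluster="$1"; servers="$2"; agents="$3"
    i=0
    while [ "$i" -lt "$servers" ]; do
        echo "k3d-${cluster}-server-${i}"
        i=$(( i + 1 ))
    done
    i=0
    while [ "$i" -lt "$agents" ]; do
        echo "k3d-${cluster}-agent-${i}"
        i=$(( i + 1 ))
    done
    echo "k3d-${cluster}-serverlb"
}

k3d_container_names testcluster 1 1

# If Docker is available, list the running containers for this cluster.
if command -v docker >/dev/null 2>&1; then
    docker ps --filter "name=k3d-testcluster" --format '{{.Names}}: {{.Status}}' \
        || echo "Docker is installed but the daemon is not reachable"
fi
```

Running `kubectl get nodes` against the cluster should show the server and agent nodes as well; the load balancer is a plain container and does not appear as a Kubernetes node.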

Install Portainer in the K3s Kubernetes Cluster

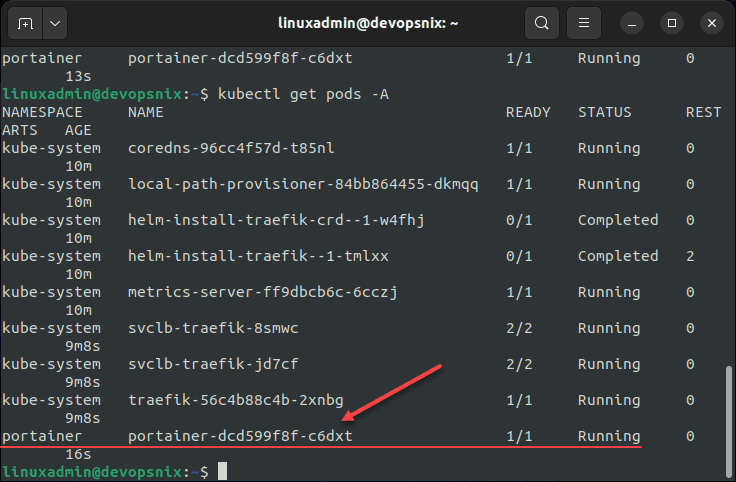

Now to actually test a workload running in the K3s Kubernetes cluster. For this, I am choosing Portainer. Here, I am simply copying the command found in the documentation on how to Install Portainer with Kubernetes on your Self-Managed Infrastructure.

The command to install Portainer in Kubernetes using the NodePort method to expose the service is as follows:

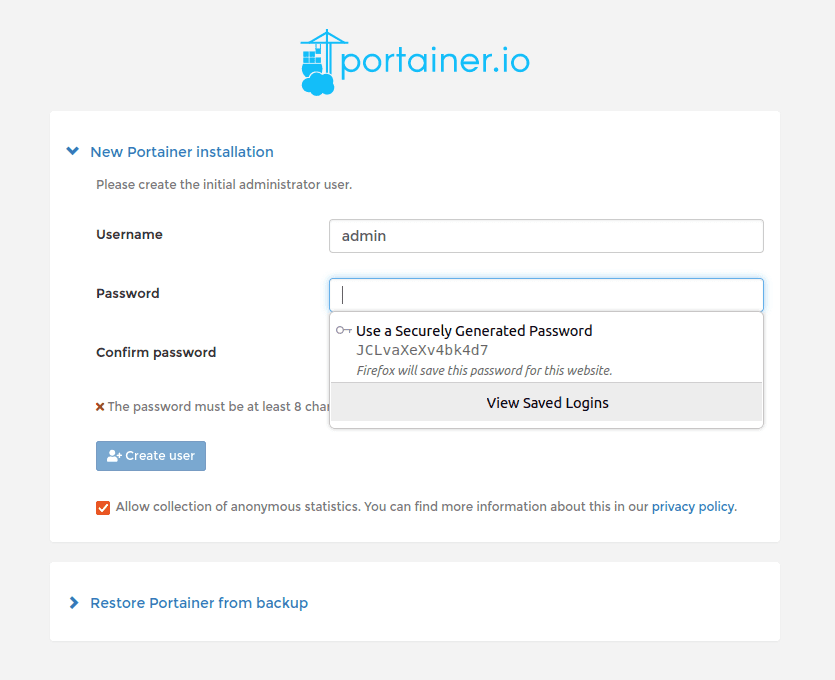

kubectl apply -n portainer -f https://raw.githubusercontent.com/portainer/k8s/master/deploy/manifests/portainer/portainer.yaml

After the pod comes to a running state, we can navigate to the NodePorts for the Portainer container at either HTTP (30777) or HTTPS (30779). You will be greeted with the Portainer initial configuration screen.
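Those two NodePorts are only reachable because they fall inside the 30500-31000 range exposed when the cluster was created. A quick sketch of that check follows; `port_in_range` and `portainer_urls` are hypothetical helpers, and `localhost` assumes you are browsing from the Docker host itself.

```shell
#!/bin/sh
# Succeed if a port lies within an inclusive range.
port_in_range() {
    [ "$1" -ge "$2" ] && [ "$1" -le "$3" ]
}

# Print the Portainer URLs for a host, using the NodePorts from the post.
portainer_urls() {
    echo "http://$1:30777"
    echo "https://$1:30779"
}

for port in 30777 30779; do
    if port_in_range "$port" 30500 31000; then
        echo "NodePort $port is inside the exposed range"
    else
        echo "NodePort $port is NOT exposed by the cluster"
    fi
done
portainer_urls localhost
```

To watch the pod come up before opening the browser, `kubectl get pods -n portainer` and `kubectl get svc -n portainer` show the pod status and the service's port mappings.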


K3s and K3D FAQs

- What is K3s? K3s is an extremely lightweight Kubernetes distribution from Rancher that can run on IoT devices, including Raspberry Pi devices, since it supports ARM architectures. In addition to being a great platform for production environments, it also serves as a great learning environment when used with Docker containers and K3D.

- What is K3D? K3D is a lightweight wrapper to run K3s (Rancher Labs’ minimal Kubernetes distribution) in Docker. You can create single-node and multi-node clusters with K3D. It is community supported and is free to download and use.

- Can you run Kubernetes in containers? Yes. As shown in the post, you can use K3D with K3s to run Kubernetes nodes inside containers. Also, you can check out my post here showing how you can use LXC containers to run Kubernetes nodes on a Linux host as well.

- Can you install K3s on Ubuntu? Yes, and there are several ways to do it. You can use Ubuntu as the Kubernetes node itself, run Kubernetes nodes in LXC containers, or use something like K3D to run K3s inside Docker containers.

Wrapping Up

Hopefully this post has shown how, using just a single Ubuntu workstation or a server running in a VM, you can install K3s on Ubuntu inside Docker containers. This takes only a few commands and is a great way to spin up and tear down Kubernetes clusters for a lab, PoC, or development environment.
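For the tear-down half of that workflow, the sketch below walks through the K3D cluster lifecycle subcommands (list, stop, start, delete). It defaults to a dry run that only prints each command; the `run` wrapper and the `DRY_RUN` variable are conventions invented for this example, not part of K3D.

```shell
#!/bin/sh
# Print a command when DRY_RUN=1 (the default here), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

CLUSTER="testcluster"
run k3d cluster list               # show existing clusters
run k3d cluster stop "$CLUSTER"    # stop the containers, keep the cluster
run k3d cluster start "$CLUSTER"   # bring the same cluster back up
run k3d cluster delete "$CLUSTER"  # remove the cluster and its containers
```

Setting `DRY_RUN=0` before running the script executes the commands for real, so only do that once you are ready to remove the lab cluster.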
