In the previous post, Storage Replica in Windows Server 2019 Features and Configuration, we looked at the new features in the Windows Server 2019 version of Storage Replica and walked through installing the feature and setting up replication. In this post, we will take a quick look at the Windows Server 2019 Storage Replica failover process and how to perform it in both Windows Admin Center and PowerShell.

Windows Server 2019 Storage Replica Failover Process

In the lab environment, I have a couple of Windows Server 2019 Standard Edition servers configured to test the Storage Replica feature.

- WIN2019SR01

- WIN2019SR02

Win2019SR01 is configured as the source, and Win2019SR02 is configured as the destination for Storage Replica.

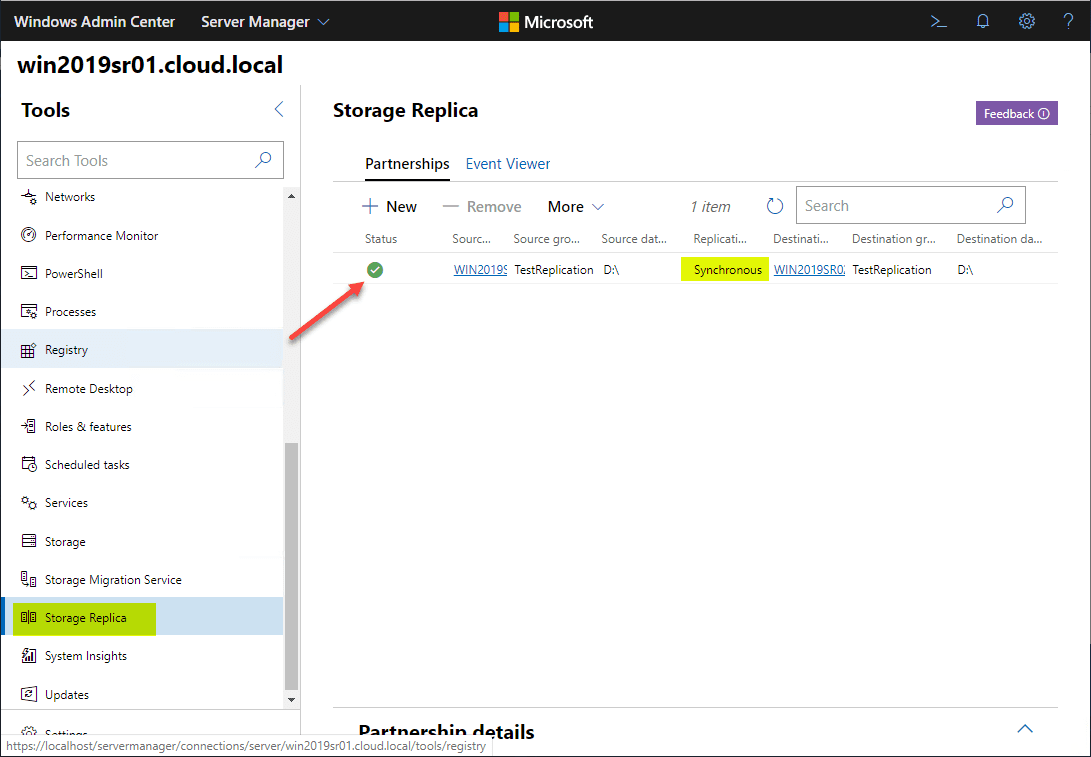

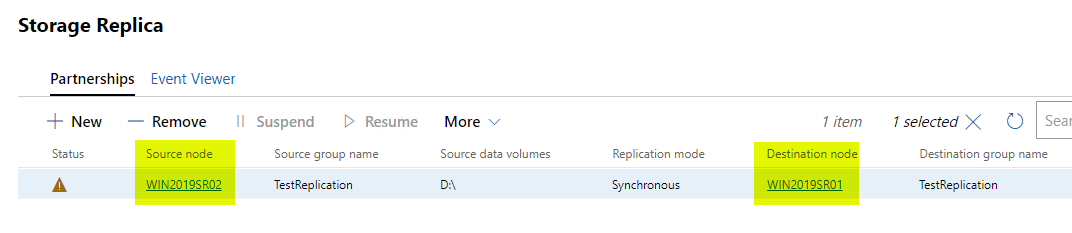
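The same source/destination layout can also be checked from PowerShell. This is a minimal sketch, assuming the Storage Replica PowerShell module is available and the commands are run locally on one of the two lab servers; the replication group name TestReplication is taken from the event messages later in this post.

```powershell
# Show which server is currently the source and which is the destination.
Get-SRPartnership |
    Select-Object SourceComputerName, SourceRGName, DestinationComputerName, DestinationRGName

# Show the state of each replicated volume in the local replication group.
Get-SRGroup -Name TestReplication |
    Select-Object -ExpandProperty Replicas |
    Select-Object DataVolume, ReplicationMode, ReplicationStatus
```

Running this before and after a failover is a quick way to confirm that the direction actually changed.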

Below, you see I am managing Windows Server 2019 Storage Replica in the Windows Admin Center under the Storage Replica menu.

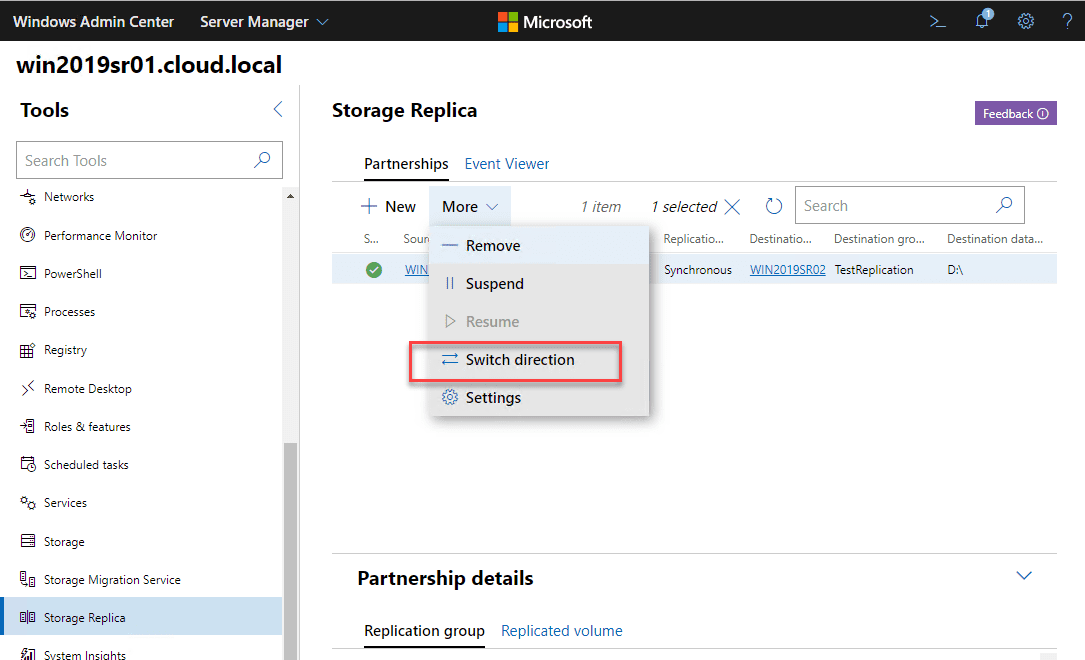

Failing over in Windows Server 2019 Storage Replica is straightforward: use the Switch direction function found under the More menu for Storage Replica in Windows Admin Center.

Simulating Storage Replica Failure

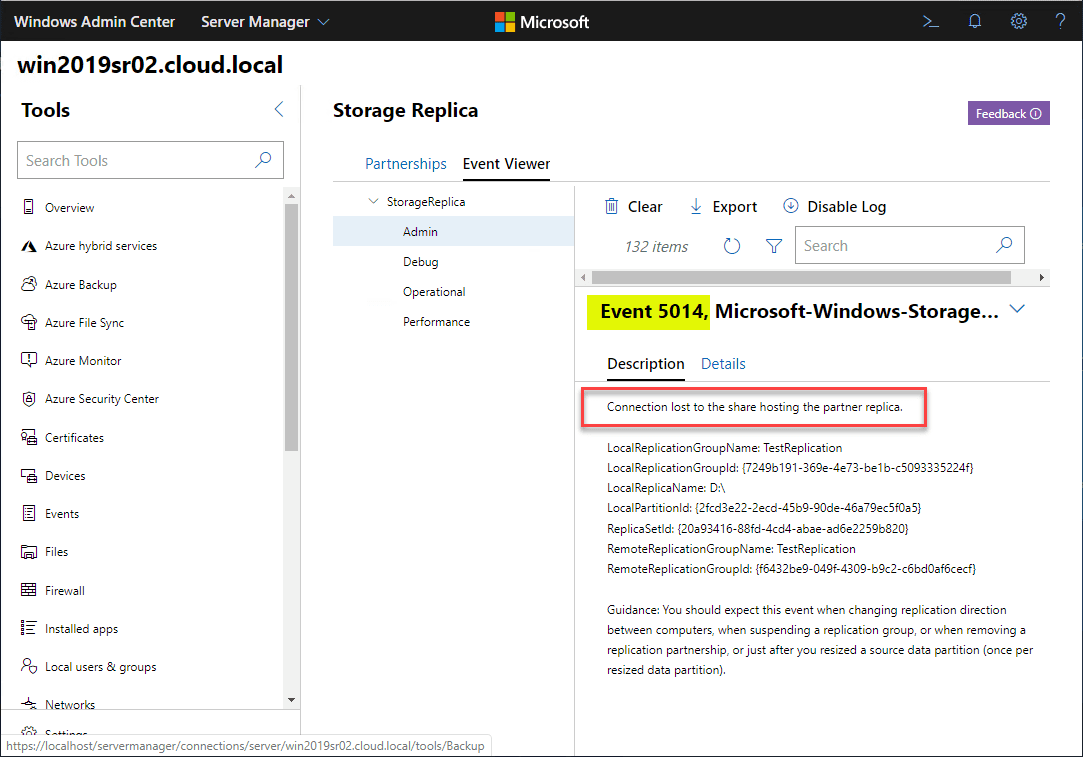

To simulate a failure in the lab scenario outlined above, I shut down the Win2019SR01 server. In Windows Admin Center on the Win2019SR02 server, I refreshed the Storage Replica dashboard and started seeing errors, as expected.

The first error is Event 5014:

Connection lost to the share hosting the partner replica. LocalReplicationGroupName: TestReplication LocalReplicationGroupId: {7249b191-369e-4e73-be1b-c5093335224f} LocalReplicaName: D: LocalPartitionId: {2fcd3e22-2ecd-45b9-90de-46a79ec5f0a5} ReplicaSetId: {20a93416-88fd-4cd4-abae-ad6e2259b820} RemoteReplicationGroupName: TestReplication RemoteReplicationGroupId: {f6432be9-049f-4309-b9c2-c6bd0af6cecf}

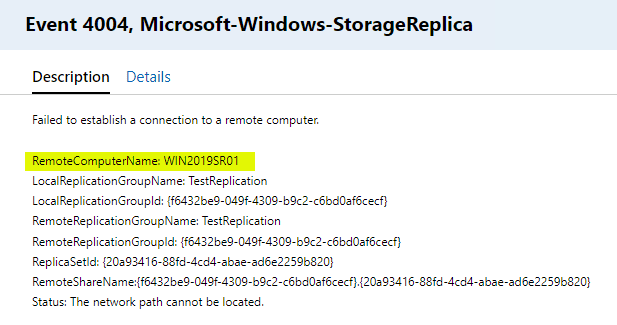

Additional warnings come through the Windows Admin Center console.

Failed to establish a connection to a remote computer. RemoteComputerName: WIN2019SR01 LocalReplicationGroupName: TestReplication LocalReplicationGroupId: {f6432be9-049f-4309-b9c2-c6bd0af6cecf} RemoteReplicationGroupName: TestReplication RemoteReplicationGroupId: {f6432be9-049f-4309-b9c2-c6bd0af6cecf} ReplicaSetId: {20a93416-88fd-4cd4-abae-ad6e2259b820} RemoteShareName:{f6432be9-049f-4309-b9c2-c6bd0af6cecf}.{20a93416-88fd-4cd4-abae-ad6e2259b820} Status: {Network Name Not Found} The specified share name cannot be found on the remote server.

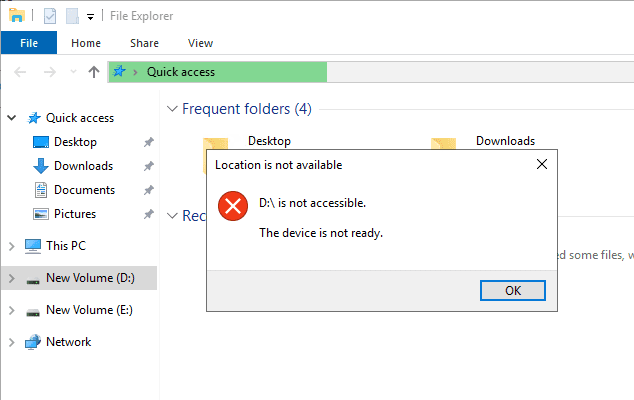

However, one thing I noticed in testing is that the failure of the primary (source) server does not by itself make the destination volume accessible. The volume on the destination server remains in an inaccessible state.

I also saw Event 5005, which states that the destination entered a stand-by state:

Destination entered stand-by state. ReplicationGroupName: TestReplication ReplicationGroupId: {7249b191-369e-4e73-be1b-c5093335224f} ReplicaName: D: ReplicaId: {20a93416-88fd-4cd4-abae-ad6e2259b820}
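The same events can be pulled from the event log on the surviving node instead of reading them in Windows Admin Center. The following is a small sketch, assuming it is run on Win2019SR02; the limit of 50 events is an arbitrary choice, and the filter covers only the two event IDs named in this post.

```powershell
# Read recent Storage Replica events and keep only Event 5014 (connection lost)
# and Event 5005 (destination entered stand-by state).
Get-WinEvent -ProviderName Microsoft-Windows-StorageReplica -MaxEvents 50 |
    Where-Object { $_.Id -in 5014, 5005 } |
    Sort-Object TimeCreated |
    Format-List TimeCreated, Id, LevelDisplayName, Message
```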

The D: drive still shows as inaccessible when I try to access it.

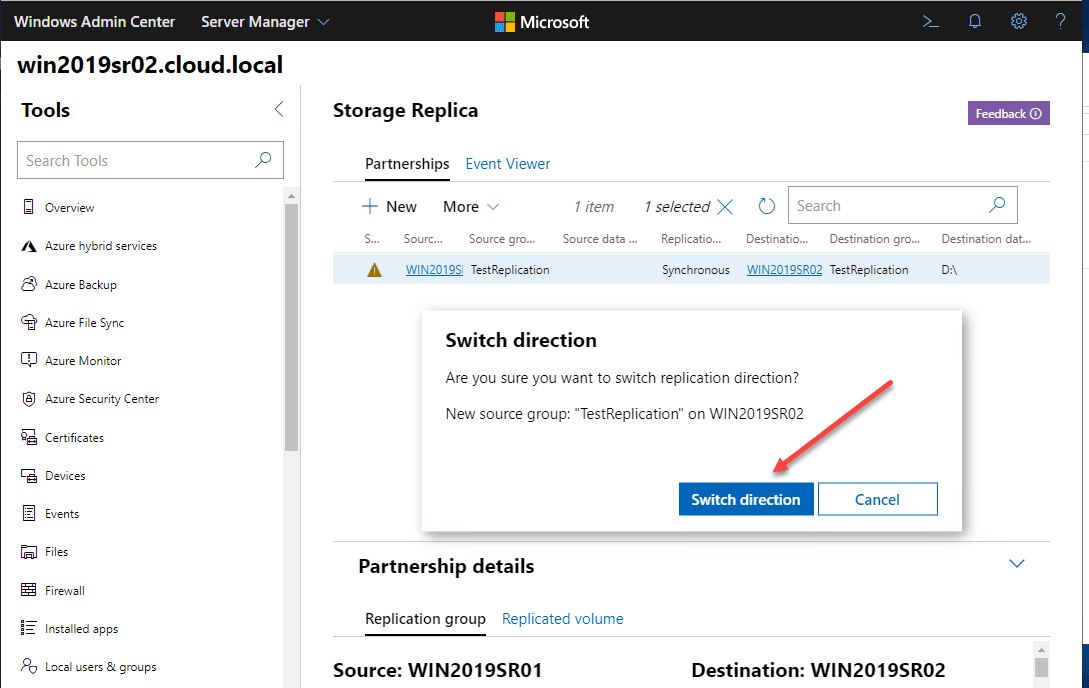

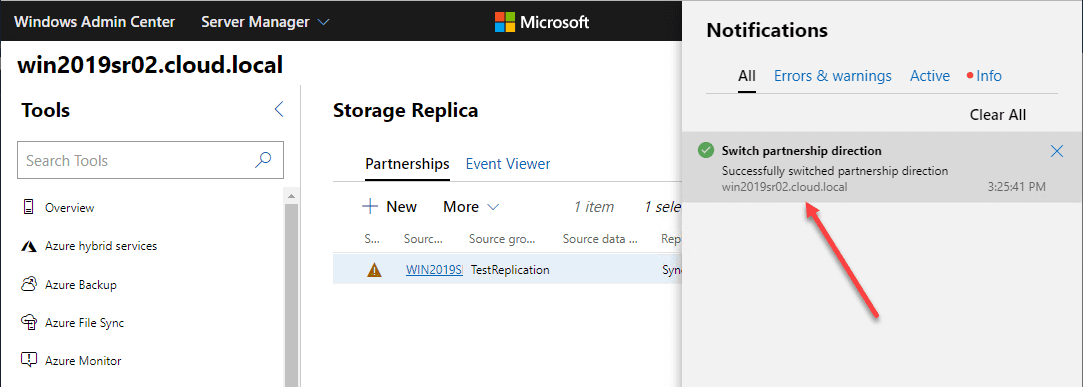

On the Win2019SR02 server, we simply need to Switch direction.

The Switch partnership direction task completed successfully.

The Source node and the Destination node have now switched directions. We still see the “bang” on the partnership which is to be expected since the other server is still down.

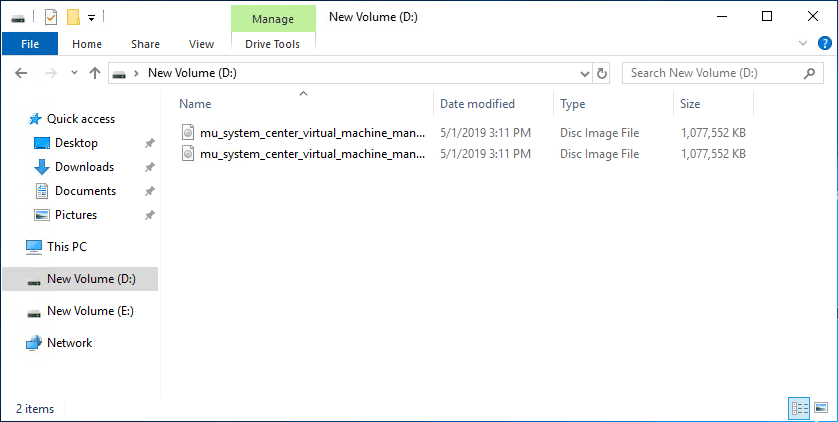

I had copied a couple of ISO files to the volume to have something to test Storage Replica synchronization with. After switching the direction, we can access the volume on the remaining Storage Replica node, Win2019SR02.

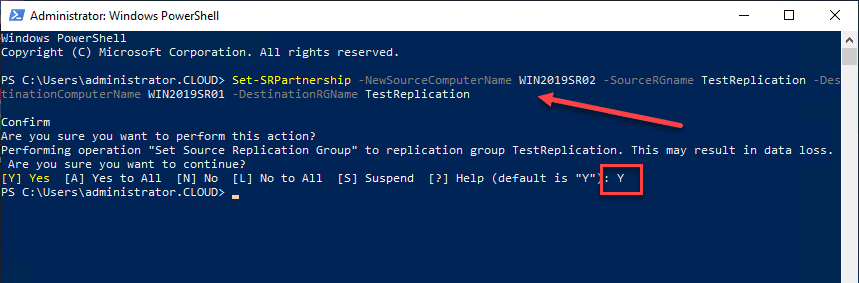

Use PowerShell to Failover Storage Replica

PowerShell can easily be used to perform the same operation.

Set-SRPartnership -NewSourceComputerName WIN2019SR02 -SourceRGName TestReplication -DestinationComputerName WIN2019SR01 -DestinationRGName TestReplication

You will be prompted to confirm the operation. Notice the warning that switching direction may result in data loss.
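Because the switch can result in data loss, it may be worth previewing it first and checking the outcome afterwards. This is a hedged sketch that reuses the lab names from this post; it assumes the cmdlet honors the standard -WhatIf switch, which is what I would expect for a cmdlet that prompts for confirmation.

```powershell
# Preview the direction switch without changing anything.
Set-SRPartnership -NewSourceComputerName WIN2019SR02 -SourceRGName TestReplication `
    -DestinationComputerName WIN2019SR01 -DestinationRGName TestReplication -WhatIf

# After running the real command and confirming the prompt, verify the new direction.
Get-SRPartnership |
    Select-Object SourceComputerName, DestinationComputerName
```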

Wrapping Up

The Windows Server 2019 Storage Replica failover process amounts to changing the direction of your Storage Replica partnership.

This can be done in either Windows Admin Center or PowerShell. In my lab testing, the failover process “just worked”, and I didn’t run into any issues.

This article doesn’t cover how to recover the failed node and resume replication after a node failure and a failover. It could also use a section on using DFS Namespaces to keep data accessible under the same UNC path when performing failover/failback.

I have a question. This was a great writeup by the way. I have a server-to-server replication running continuously. There are 4 large volumes. The replication is running well and I can switch directions without issue. If I have two servers, we will call them SERVER1 and SERVER2, changing the direction doesn’t really help me. If a user needs a file from \SERVER1testfile, it won’t be available. Sure, the volume is now live on SERVER2, but DNS does not change. If I need to fail over, do I need to change the direction on all volumes and change the name of SERVER2 to SERVER1 (and the IP address)? I feel like I am missing something here. There is not a lot of documentation on this. Thanks in advance.

I am in the middle of setting up a similar scenario on my network. I believe you will want to set up a DFS Namespace that you can point users to instead of pointing them directly to a server path. I am still learning this as I go, but so far it appears to be working.

This is correct. Create exactly the same shares on both file server nodes for the same folders, then create a DFS Namespace folder with folder targets pointing to both file servers.

Configure the primary folder target with a referral priority of “First among all targets” and secondary folder target with a referral priority of “Last among all targets”. You can leave the folder targets enabled but MS documentation about Storage Replica does state that you should have the folder target to the secondary disabled. If you do disable one of the folder targets, make sure to set the client cache duration to 1 second, so that when you enable or disable folder targets the clients can immediately reconnect to the other folder target.

Have systems and users access the data via the DFS Namespace folder!
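The referral settings described above can be applied with the DFS Namespace cmdlets. This is only a sketch under stated assumptions: the namespace folder path and share names are hypothetical placeholders, both folder targets are assumed to exist already, and I am assuming that “First among all targets” and “Last among all targets” correspond to the GlobalHigh and GlobalLow referral priority classes.

```powershell
# Hypothetical names: replace the namespace folder and share paths with your own.
$folder    = '\\contoso.local\Files\Data'   # DFS Namespace folder (assumed)
$primary   = '\\WIN2019SR01\Data'           # share on the current source (assumed)
$secondary = '\\WIN2019SR02\Data'           # share on the current destination (assumed)

# Prefer the primary target first and the secondary target last.
Set-DfsnFolderTarget -Path $folder -TargetPath $primary   -ReferralPriorityClass GlobalHigh
Set-DfsnFolderTarget -Path $folder -TargetPath $secondary -ReferralPriorityClass GlobalLow

# Optional, per the comment above: disable the secondary target and
# shorten the client referral cache duration to 1 second.
Set-DfsnFolderTarget -Path $folder -TargetPath $secondary -State Offline
Set-DfsnFolder -Path $folder -TimeToLiveSec 1
```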

I created a PS script to be able to switch the storage replica and also made a version that enables/disables the DFS folder targets accordingly. Right now, I’m testing with having both folder targets enabled and have not run into any issues as long as referral priority is set correctly.
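For readers who want a starting point, a script along those lines might look like the sketch below. This is not the script mentioned above; the function name, its parameters, and the share layout are all hypothetical, and it assumes both replication groups share one name (as in the lab, where both are called TestReplication) and that the DFS folder targets already exist.

```powershell
# Hypothetical helper: switch Storage Replica direction, then flip the DFS folder targets.
function Switch-ReplicaAndDfsTarget {
    param(
        [Parameter(Mandatory)] [string] $NewSource,
        [Parameter(Mandatory)] [string] $NewDestination,
        [Parameter(Mandatory)] [string] $ReplicationGroup,
        [Parameter(Mandatory)] [string] $DfsFolder,
        [Parameter(Mandatory)] [string] $ShareName
    )

    # Make the chosen node the new source (prompts for confirmation).
    Set-SRPartnership -NewSourceComputerName $NewSource -SourceRGName $ReplicationGroup `
        -DestinationComputerName $NewDestination -DestinationRGName $ReplicationGroup `
        -ErrorAction Stop

    # Only touch DFS if the partnership really points the new way,
    # for example if the confirmation prompt was declined.
    $current = Get-SRPartnership | Where-Object { $_.SourceRGName -eq $ReplicationGroup }
    if ($current.SourceComputerName -notlike "$NewSource*") {
        throw "Partnership source is still '$($current.SourceComputerName)'; DFS targets left unchanged."
    }

    # Send clients to the new source and take the old target out of referrals.
    Set-DfsnFolderTarget -Path $DfsFolder -TargetPath "\\$NewSource\$ShareName" -State Online
    Set-DfsnFolderTarget -Path $DfsFolder -TargetPath "\\$NewDestination\$ShareName" -State Offline
}

# Example call with placeholder values:
# Switch-ReplicaAndDfsTarget -NewSource WIN2019SR02 -NewDestination WIN2019SR01 `
#     -ReplicationGroup TestReplication -DfsFolder '\\contoso.local\Files\Data' -ShareName Data
```

The check between the two steps is a design choice: it keeps the DFS referrals from pointing at a node whose volume is still the inaccessible destination.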

However, I have tested a disaster scenario in which the primary node has failed and a manual failover was done to the secondary node, making it the new primary node. When the original primary node is brought back online, its volume still has a drive letter/mount point attached. Drive letters and mount points are attached and detached automatically only when the failover is performed while both nodes are online. A failover performed with one node down cannot detach that node’s drive letters/mount points, because the node is unreachable. In such a DR scenario, having both folder targets on the DFS Namespace folder enabled would be bad: the secondary node holds the most recent data, but bringing back the primary node with an older copy of the data means that all users will immediately switch back to the older data (since that folder target is still preferred first among all targets in the DFS Namespace).

For such a DR case, it might then be better to disable the DFS Namespace folder target pointing to the passive node and switch the folder targets after performing a failover. That ensures data on the passive node can never be reached via the DFS Namespace, even though its drive letter/mount point isn’t accessible under normal circumstances. However, I have noticed a considerable delay before clients “know” that the folder targets were switched by enabling and disabling them, and I have seen errors when trying to access the data afterwards, even with the client cache duration set to 1 second.

Of course, it would then still be possible to access the older data directly, outside of the DFS Namespace, via the UNC path to the file server node and file share, even though that’s not the UNC path you instructed systems and users to use. So in this DR case, when bringing back the previously primary node, it is important to first manually remove the share on its volume before activating its network card. Afterwards, restore synchronization from the new primary node to the restored node by resetting it with the Set-SRPartnership PowerShell cmdlet, then recreate the share. Finally, reverse the synchronization direction if you need the restored node to be the primary once again.