Create an isolated test environment with the same IPs and subnet in VMware

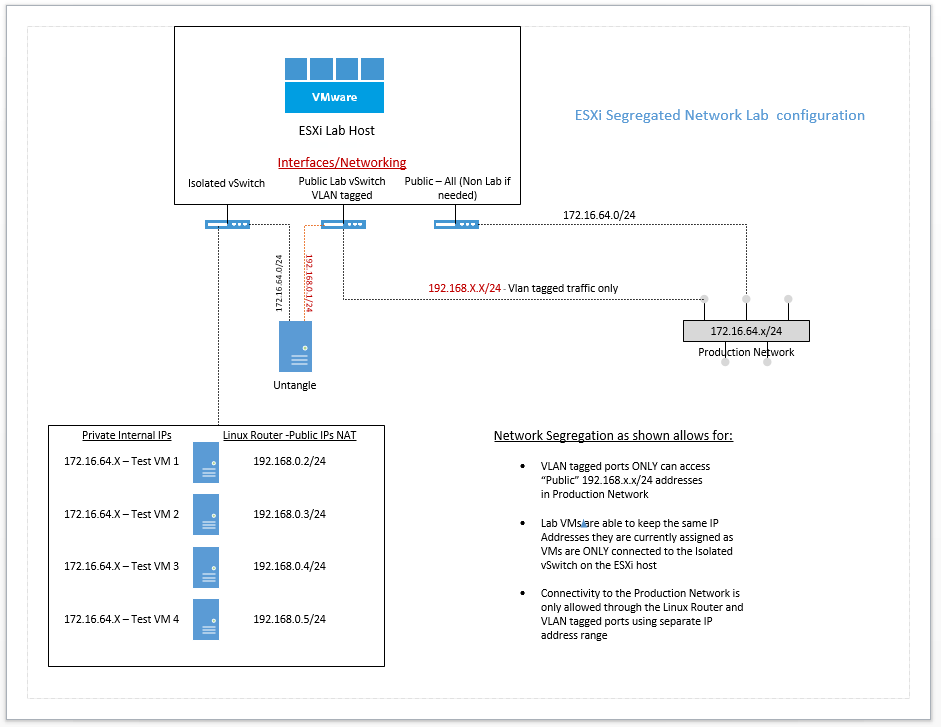

Recently, I needed to set up a test/development environment that keeps the same IPs, subnet, and machine names as the production environment, while still being reachable from the production network. The test environment consists of a single VMware ESXi 5.5 host with a healthy amount of storage, RAM, and CPUs. There are many other designs on the Internet that would work equally well. However, my objective for this project was straightforward, and I wanted to meet it without complicating things too much. As you will see below, the goal is to keep the cloned test/development VMs as close to the production VMs as possible.

Concise list of requirements:

- Machines will be cloned or converted copies of production physical machines and VM guests

- They will have the same IPs/subnet and the same network card configuration

- Computer names will remain the same.

- They will need to be accessible from the production network by developers and engineers

- All of the above must be accomplished without conflicting with production resources, and with a measure of security and manageability.

- A Linux box will be used to route traffic from the segregated network environment for the lab over to the production network

- Firewall rules will be used on the Linux router to make sure no unwanted traffic leaks over to the production network

Physical Network Switch configuration:

- On the physical network switch, a new VLAN was configured for Public Lab traffic

- This VLAN will carry traffic from the Lab Public interface (vSwitch2) on the ESXi host.

- The uplink from the ESXi host is an untagged port

- Anyone needing to access the public lab addresses will need to have their uplink ports tagged with the appropriate VLAN to communicate.

VMware Host configuration

Starting with the VM host, the setup is fairly simple. Since this is basically a “reclaimed” file server, the host has only 2 network ports for the test/development environment. All storage is local, so there is no iSCSI traffic to worry about. I configured the networking as follows:

vSwitch0:

- vmnic0 is the physical adapter that is configured

- This is our Public All interface for those VMs that we may need to run on this box outside of the Lab environment

- This is also where our management interface resides

vSwitch1:

- Isolated vSwitch with no network adapters bound

- This is the isolated virtual network switch that will house our cloned production VMs with the same IP addresses

vSwitch2:

- vmnic1 is the physical adapter that is configured

- This is our Lab Public virtual switch that is physically uplinked to our Lab VLAN that was created above.
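The three-switch layout above can be written down as data and sanity-checked. The following is only a sketch: the `VSwitch` class and the helper functions are hypothetical names made up for illustration, not part of any VMware tooling, and the check only confirms that the layout on paper matches the intent (vSwitch1 has no uplinks, and no physical adapter is shared).

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class VSwitch:
    """Illustrative model of a standard vSwitch (not a VMware API object)."""
    name: str
    role: str
    uplinks: List[str] = field(default_factory=list)


# Layout as described in this post.
LAYOUT = [
    VSwitch("vSwitch0", "Public All + management", ["vmnic0"]),
    VSwitch("vSwitch1", "Isolated lab network for cloned production VMs"),
    VSwitch("vSwitch2", "Lab Public, uplinked to the Lab VLAN", ["vmnic1"]),
]


def isolated_switches(switches):
    """Names of vSwitches with no physical adapter bound."""
    return [sw.name for sw in switches if not sw.uplinks]


def shared_uplinks(switches):
    """Physical adapters that appear on more than one vSwitch."""
    owner = {}
    shared = []
    for sw in switches:
        for nic in sw.uplinks:
            if nic in owner:
                shared.append(nic)
            owner[nic] = sw.name
    return shared
```

Calling `isolated_switches(LAYOUT)` should list only vSwitch1, and `shared_uplinks(LAYOUT)` should come back empty. If either result differs, the lab clones could end up with a path to the physical network.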

Linux Router

For what I am trying to do, the easiest Linux router with a no-brainer GUI is an Untangle box. On many forums you will see Vyatta recommended for this purpose. In my findings, however, Vyatta as a project has been abandoned. There is a command-line-only (no GUI) fork of Vyatta called VyOS. It looks to be a powerful little router that could most likely do everything this project needs. However, I did not have the time to figure out the syntax and learn another router OS, and I didn’t want to spend a lot of time tinkering to get a working configuration. I may revisit VyOS on a future project, but for now I have opted to go with Untangle.

For the Untangle box, I created a low-resource VM with 2 NICs. The NICs are bound to the virtual switches as follows:

- ETH0 is “External” and is bound to vSwitch2 which is used to connect to the Outside VLAN

- ETH1 is “Internal” and is bound to vSwitch1 which is the Isolated network with no interfaces on the ESXi host.

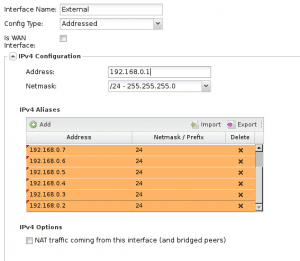

In the External interface configuration, I unchecked “is WAN Interface” and set the base address of my External “Public” interface, which establishes the address scheme that users will connect to in order to reach the lab environment. Then I assigned a list of aliases to the External interface so that we can do NAT or port forwarding to specific internal IPs based on which outside address is connected to.

Note that I have set a 192.168.0.1 base address for the External interface. The IPv4 aliases then provide more specific “outside” IP addresses, each of which maps to a specific internal machine.

On the Internal interface of the Untangle box, I simply used the address of the production router, which is already configured as the gateway on the guest VMs cloned from production. This way, no reconfiguration is required on the test/development VMs. They still think they are talking to the production gateway, when it is actually the Internal interface of the Untangle box.
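The addressing scheme only works if the outside addresses are distinct from the production addresses kept inside the lab. Here is a small sketch of that check using Python's standard `ipaddress` module. Only the 192.168.0.1 base address comes from this post; the /24 prefix, the production subnet, and the alias addresses are assumptions made up for illustration.

```python
from ipaddress import ip_address, ip_network

# 192.168.0.1 is the External base address; the /24 prefix is an assumption.
LAB_PUBLIC_NET = ip_network("192.168.0.0/24")
# Hypothetical production subnet that the cloned VMs keep inside the lab.
PRODUCTION_NET = ip_network("10.1.1.0/24")
# Hypothetical IPv4 aliases assigned to the External interface.
ALIASES = ["192.168.0.11", "192.168.0.12"]


def addressing_problems(lab_net, prod_net, aliases):
    """Return a list of human-readable problems; empty means the plan is consistent."""
    problems = []
    if lab_net.overlaps(prod_net):
        problems.append(f"{lab_net} overlaps production subnet {prod_net}")
    for alias in aliases:
        if ip_address(alias) not in lab_net:
            problems.append(f"alias {alias} is outside {lab_net}")
    return problems
```

With the values above, `addressing_problems(LAB_PUBLIC_NET, PRODUCTION_NET, ALIASES)` returns an empty list. An overlap would mean that users on the production network could not tell a lab alias from a real production address.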

Firewall Rules

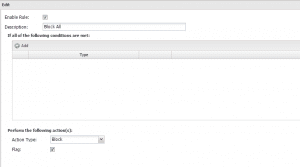

For the Untangle firewall configuration, I placed a block-all rule at the bottom of the rule list. It acts as the catch-all that prevents ANY undefined traffic from leaking between the lab environment and the production environment. The block-all rule looks like this:

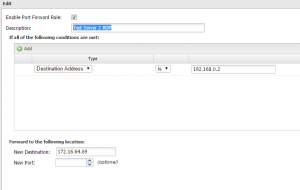
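To see why the block-all rule belongs at the bottom, here is a generic model of first-match rule evaluation. It is a sketch only: it does not reproduce Untangle's rule engine or where the firewall sits relative to port forwarding, the `Rule` class and `evaluate` function are hypothetical names, and the 192.168.0.0/24 network and the pass-by-default behavior are assumptions.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional


@dataclass
class Rule:
    """Illustrative firewall rule; None in a field means 'match anything'."""
    description: str
    action: str                      # "pass" or "block"
    dst_net: Optional[str] = None
    dst_port: Optional[int] = None

    def matches(self, dst_ip, dst_port):
        if self.dst_net is not None and ip_address(dst_ip) not in ip_network(self.dst_net):
            return False
        if self.dst_port is not None and self.dst_port != dst_port:
            return False
        return True


# Specific allow rules first, catch-all block last.
RULES = [
    Rule("Allow RDP to lab aliases", "pass", "192.168.0.0/24", 3389),
    Rule("Block all", "block"),
]


def evaluate(rules, dst_ip, dst_port):
    """Return the action of the first matching rule."""
    for rule in rules:
        if rule.matches(dst_ip, dst_port):
            return rule.action
    # Assumption: traffic that matches no rule is passed, which is
    # exactly why the catch-all block rule matters.
    return "pass"
```

In this model, RDP to a lab alias is passed by the first rule, while anything else falls through to the block-all rule. Moving the block-all rule to the top would block everything, including the traffic you meant to allow.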

Then we can set up port forward rules for needed services such as RDP:

We simply port forward traffic arriving at a predetermined outside alias address to the corresponding “Internal” address in the lab environment, on the Internal side of the Untangle box.

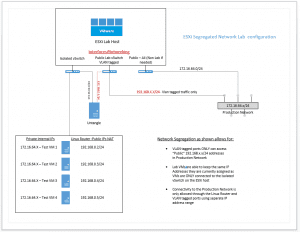
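Conceptually, the port forward rules form a lookup table from an outside alias and port to an inside address and port. The sketch below shows that idea only. All addresses are hypothetical, and the table and `forward` function are illustrative names rather than anything Untangle exposes.

```python
from ipaddress import ip_address

# Hypothetical mapping: (outside alias, port) -> (internal lab address, port).
# The internal addresses stand in for the unchanged production IPs of the clones.
PORT_FORWARDS = {
    ("192.168.0.11", 3389): ("10.1.1.11", 3389),  # RDP to a cloned server
    ("192.168.0.12", 3389): ("10.1.1.12", 3389),  # RDP to another clone
}


def forward(dst_ip, dst_port):
    """Return the internal (address, port) for an outside destination, or None."""
    key = (str(ip_address(dst_ip)), dst_port)  # ip_address() rejects malformed input
    return PORT_FORWARDS.get(key)
```

For example, `forward("192.168.0.11", 3389)` resolves to the first clone's internal address, while a destination with no entry returns `None` and is left for the block-all rule to handle.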

Configuration layout for conceptualizing:

Final Thoughts

Hopefully this post helps you create an isolated test environment with the same IPs and subnet in your own VMware environment. Take a look at an updated layer 3 design below.

Layer 3 Design – Overlapping Subnets Lab Environment